mirror of https://github.com/AbdBarho/stable-diffusion-webui-docker.git

synced 2025-10-27 16:24:26 -04:00

Compare commits

20 Commits

| SHA1 |
|---|
| 59892da866 |
| fceb83c2b0 |
| 17b01a7627 |
| b96d7c30d0 |
| aae83bb8f2 |
| 10763a8f61 |
| 64e8f093d2 |
| 3e0a137c23 |
| a1c16942ff |
| 6ae3473214 |
| 5d731cb43c |
| c1fa2f1457 |
| d8cfdd3af5 |
| 03d12cbcd9 |
| 2e76b6c4e7 |
| 5eae2076ce |
| 725e1f39ba |
| ab651fe0d7 |
| f76f8d4671 |
| e32a48f42a |

11

.github/ISSUE_TEMPLATE/bug.md

vendored


@@ -7,13 +7,17 @@ assignees: ''

 ---

-**Has this issue been opened before? Check the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Main), the [issues](https://github.com/AbdBarho/stable-diffusion-webui-docker/issues?q=is%3Aissue) and in [the issues in the WebUI repo](https://github.com/hlky/stable-diffusion-webui)**
+**Has this issue been opened before? Check the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Main), the [issues](https://github.com/AbdBarho/stable-diffusion-webui-docker/issues?q=is%3Aissue)**



 **Describe the bug**


+**Which UI**
+
+hlky or auto or auto-cpu or lstein?
+
 **Steps to Reproduce**
 1. Go to '...'
 2. Click on '....'
@@ -22,8 +26,11 @@ assignees: ''

 **Hardware / Software:**
 - OS: [e.g. Windows / Ubuntu and version]
+- RAM:
 - GPU: [Nvidia 1660 / No GPU]
-- Version [e.g. 22]
+- VRAM:
+- Docker Version, Docker compose version
+- Release version [e.g. 1.0.1]

 **Additional context**
 Any other context about the problem here. If applicable, add screenshots to help explain your problem.

29

.github/workflows/docker.yml

vendored


@@ -1,24 +1,19 @@
-name: Build Image
+name: Build Images

 on: [push]

-# TODO: how to cache intermediate images?
 jobs:
-  build_hlky:
+  build:
+    strategy:
+      matrix:
+        profile:
+          - auto
+          - hlky
+          - lstein
+          - download
     runs-on: ubuntu-latest
-    name: hlky
+    name: ${{ matrix.profile }}
     steps:
       - uses: actions/checkout@v3
-      - run: docker compose build --progress plain
-  build_AUTOMATIC1111:
-    runs-on: ubuntu-latest
-    name: AUTOMATIC1111
-    steps:
-      - uses: actions/checkout@v3
-      - run: cd AUTOMATIC1111 && docker compose build --progress plain
-  build_lstein:
-    runs-on: ubuntu-latest
-    name: lstein
-    steps:
-      - uses: actions/checkout@v3
-      - run: cd lstein && docker compose build --progress plain
+      # better caching?
+      - run: docker compose --profile ${{ matrix.profile }} build --progress plain
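The workflow change above replaces three hand-written jobs with one job fanned out over a `profile` matrix. As a rough illustration (a sketch only, not part of the repository; the helper names `build_command` and `matrix_commands` are made up here), the commands the four matrix jobs end up running can be listed like this:

```python
# Profiles listed under strategy.matrix.profile in the new workflow.
PROFILES = ["auto", "hlky", "lstein", "download"]


def build_command(profile):
    """Return the argv of the `run:` step for one matrix entry."""
    return ["docker", "compose", "--profile", profile, "build", "--progress", "plain"]


def matrix_commands(profiles):
    """Expand the matrix into one shell command string per profile."""
    return [" ".join(build_command(profile)) for profile in profiles]


if __name__ == "__main__":
    for command in matrix_commands(PROFILES):
        print(command)
```

Each matrix entry becomes its own job, so adding a UI to the build only needs one more entry in the `profile` list.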

20

.github/workflows/stale.yml

vendored

Normal file


@@ -0,0 +1,20 @@
+name: 'Close stale issues and PRs'
+on:
+  schedule:
+    - cron: '30 1 * * *'
+
+jobs:
+  stale:
+    runs-on: ubuntu-latest
+    steps:
+      - uses: actions/stale@v5
+        with:
+          only-labels: awaiting-response
+          stale-issue-message: This issue is stale because it has been open 14 days with no activity. Remove stale label or comment or this will be closed in 7 days.
+          stale-pr-message: This PR is stale because it has been open 14 days with no activity. Remove stale label or comment or this will be closed in 7 days.
+          close-issue-message: This issue was closed because it has been stalled for 7 days with no activity.
+          close-pr-message: This PR was closed because it has been stalled for 7 days with no activity.
+          days-before-issue-stale: 14
+          days-before-pr-stale: 14
+          days-before-issue-close: 7
+          days-before-pr-close: 7
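The two day counts in this workflow define a simple timeline: 14 days without activity until an item is marked stale, then 7 more until it is closed. A minimal sketch of that arithmetic follows, assuming whole-day granularity and ignoring both the 01:30 UTC schedule and the `awaiting-response` label filter; the function name `stale_timeline` is hypothetical and not part of `actions/stale`.

```python
from datetime import date, timedelta

# Values taken from the workflow's `with:` block.
DAYS_BEFORE_STALE = 14
DAYS_BEFORE_CLOSE = 7


def stale_timeline(last_activity, days_before_stale=DAYS_BEFORE_STALE,
                   days_before_close=DAYS_BEFORE_CLOSE):
    """Return (stale_on, close_on) for an item with no further activity."""
    stale_on = last_activity + timedelta(days=days_before_stale)
    close_on = stale_on + timedelta(days=days_before_close)
    return stale_on, close_on


if __name__ == "__main__":
    stale_on, close_on = stale_timeline(date(2022, 9, 1))
    print("marked stale on:", stale_on.isoformat())
    print("closed on:", close_on.isoformat())
```

Any comment or label removal in between resets the clock, which this sketch does not model.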

@@ -1,14 +0,0 @@
-# WebUI for AUTOMATIC1111
-
-The WebUI of [AUTOMATIC1111/stable-diffusion-webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui) as docker container!
-
-## Setup
-
-Clone this repo, download the `model.ckpt` and `GFPGANv1.3.pth` and put into the `models` folder as mentioned in [the main README](../README.md), then run
-
-```
-cd AUTOMATIC1111
-docker compose up --build
-```
-
-You can change the cli parameters in `AUTOMATIC1111/docker-compose.yml`. The full list of cil parameters can be found [here](https://github.com/AUTOMATIC1111/stable-diffusion-webui/blob/master/modules/shared.py)

@@ -1,4 +0,0 @@
-{
-  "outdir_samples": "/output",
-  "font": "DejaVuSans.ttf"
-}

@@ -1,20 +0,0 @@
-version: '3.9'
-
-services:
-  model:
-    build: .
-    ports:
-      - "7860:7860"
-    volumes:
-      - ../cache:/cache
-      - ../output:/output
-      - ../models:/models
-    environment:
-      - CLI_ARGS=--medvram --opt-split-attention
-    deploy:
-      resources:
-        reservations:
-          devices:
-            - driver: nvidia
-              device_ids: ['0']
-              capabilities: [gpu]

91

README.md


@@ -2,84 +2,66 @@

|

|||||||

|

|

||||||

Run Stable Diffusion on your machine with a nice UI without any hassle!

|

Run Stable Diffusion on your machine with a nice UI without any hassle!

|

||||||

|

|

||||||

This repository provides the [WebUI](https://github.com/hlky/stable-diffusion-webui) as a docker image for easy setup and deployment.

|

This repository provides multiple UIs for you to play around with stable diffusion:

|

||||||

|

|

||||||

Now with experimental support for 2 other forks:

|

|

||||||

|

|

||||||

- [AUTOMATIC1111](./AUTOMATIC1111/) (Stable, very few bugs!)

|

|

||||||

- [lstein](./lstein/)

|

|

||||||

|

|

||||||

NOTE: big update coming up!

|

|

||||||

|

|

||||||

## Features

|

## Features

|

||||||

|

|

||||||

- Interactive UI with many features, and more on the way!

|

### AUTOMATIC1111

|

||||||

- Support for 6GB GPU cards.

|

|

||||||

- GFPGAN for face reconstruction, RealESRGAN for super-sampling.

|

|

||||||

- Experimental:

|

|

||||||

- Latent Diffusion Super Resolution

|

|

||||||

- GoBig

|

|

||||||

- GoLatent

|

|

||||||

- many more!

|

|

||||||

|

|

||||||

## Setup

|

[AUTOMATIC1111's fork](https://github.com/AUTOMATIC1111/stable-diffusion-webui) is imho the most feature rich yet elegant UI:

|

||||||

|

|

||||||

Make sure you have an **up to date** version of docker installed. Download this repo and run:

|

- Text to image, with many samplers and even negative prompts!

|

||||||

|

- Image to image, with masking, cropping, in-painting, out-painting, variations.

|

||||||

|

- GFPGAN, RealESRGAN, LDSR, CodeFormer.

|

||||||

|

- Loopback, prompt weighting, prompt matrix, X/Y plot

|

||||||

|

- Live preview of the generated images.

|

||||||

|

- Highly optimized 4GB GPU support, or even CPU only!

|

||||||

|

- [Full feature list here](https://github.com/AUTOMATIC1111/stable-diffusion-webui-feature-showcase)

|

||||||

|

|

||||||

```

|

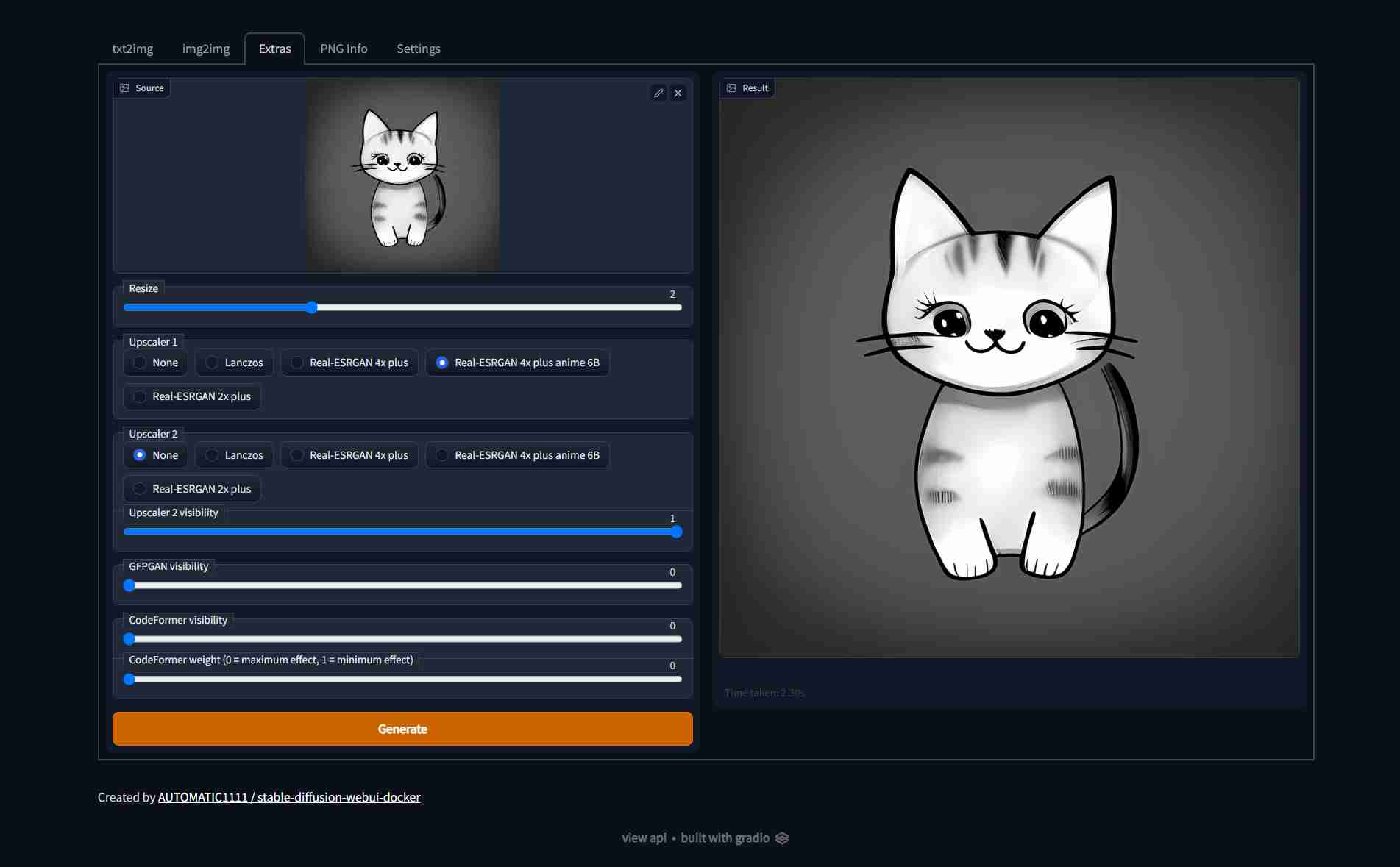

| Text to image | Image to image | Extras |

|

||||||

docker compose build

|

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||||

```

|

|  |  |  |

|

||||||

|

|

||||||

you can let it build in the background while you download the different models

|

### hlky

|

||||||

|

|

||||||

- [Stable Diffusion v1.4 (4GB)](https://www.googleapis.com/storage/v1/b/aai-blog-files/o/sd-v1-4.ckpt?alt=media), rename to `model.ckpt`

|

[hlky's fork](https://github.com/hlky/stable-diffusion-webui) is one of the most popular UIs, with many features:

|

||||||

- (Optional) [GFPGANv1.3.pth (333MB)](https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth).

|

|

||||||

- (Optional) [RealESRGAN_x4plus.pth (64MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth) and [RealESRGAN_x4plus_anime_6B.pth (18MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth).

|

|

||||||

- (Optional) [LDSR (2GB)](https://heibox.uni-heidelberg.de/f/578df07c8fc04ffbadf3/?dl=1) and [its configuration](https://heibox.uni-heidelberg.de/f/31a76b13ea27482981b4/?dl=1), rename to `LDSR.ckpt` and `LDSR.yaml` respectively.

|

|

||||||

<!-- - (Optional) [RealESRGAN_x2plus.pth (64MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth)

|

|

||||||

- TODO: (I still need to find the RealESRGAN_x2plus_6b.pth) -->

|

|

||||||

|

|

||||||

Put all of the downloaded files in the `models` folder, it should look something like this:

|

- Text to image, with many samplers

|

||||||

|

- Image to image, with masking, cropping, in-painting, variations.

|

||||||

|

- GFPGAN, RealESRGAN, LDSR, GoBig, GoLatent

|

||||||

|

- Loopback, prompt weighting

|

||||||

|

- 6GB or even 4GB GPU support!

|

||||||

|

- [Full feature list here](https://github.com/sd-webui/stable-diffusion-webui/blob/master/README.md)

|

||||||

|

|

||||||

```

|

Screenshots:

|

||||||

models/

|

|

||||||

├── model.ckpt

|

|

||||||

├── GFPGANv1.3.pth

|

|

||||||

├── RealESRGAN_x4plus.pth

|

|

||||||

├── RealESRGAN_x4plus_anime_6B.pth

|

|

||||||

├── LDSR.ckpt

|

|

||||||

└── LDSR.yaml

|

|

||||||

```

|

|

||||||

|

|

||||||

## Run

|

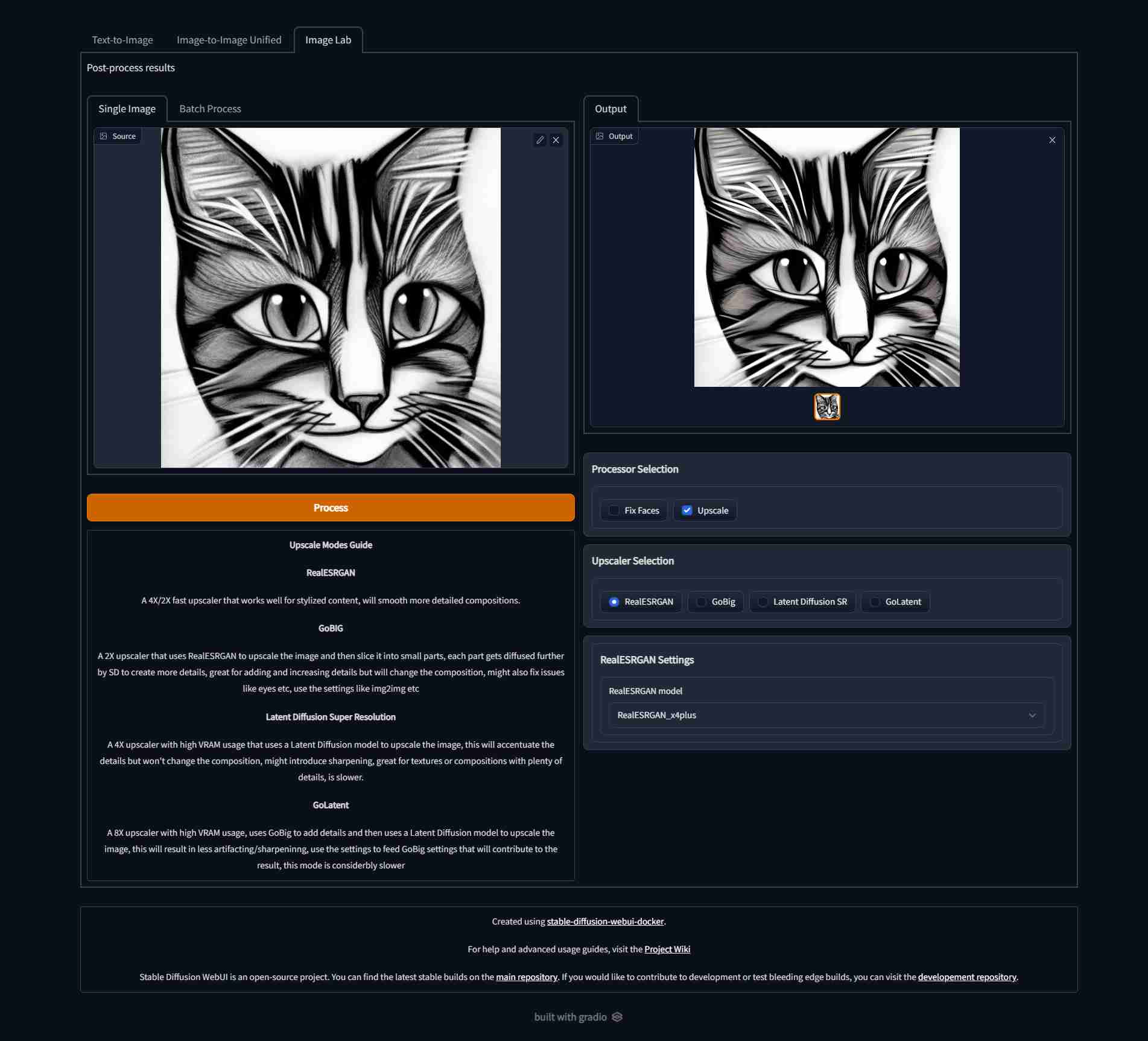

| Text to image | Image to image | Image Lab |

|

||||||

|

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||||

|

|  |  |  |

|

||||||

|

|

||||||

After the build is done, you can run the app with:

|

### lstein

|

||||||

|

|

||||||

```

|

[lstein's fork](https://github.com/lstein/stable-diffusion) is very mature when it comes to the cli, and the WebUI has potential.

|

||||||

docker compose up --build

|

|

||||||

```

|

|

||||||

|

|

||||||

Will start the app on http://localhost:7860/

|

| Text to image | Image to image | Extras |

|

||||||

|

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||||

|

|  |  |  |

|

||||||

|

|

||||||

Note: the first start will take sometime as some other models will be downloaded, these will be cached in the `cache` folder, so next runs are faster.

|

## Setup & Usage

|

||||||

|

|

||||||

### FAQ

|

Visit the wiki for [Setup](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Setup) and [Usage](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Usage) instructions, checkout the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/FAQ) page if you face any problems, or create a new issue!

|

||||||

|

|

||||||

You can find fixes to common issues [in the wiki page.](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/FAQ)

|

## Contributing

|

||||||

|

|

||||||

## Config

|

Contributions are welcome! create an issue first of what you want to contribute (before you implement anything) so we can talk about it.

|

||||||

|

|

||||||

in the `docker-compose.yml` you can change the `CLI_ARGS` variable, which contains the arguments that will be passed to the WebUI. By default: `--extra-models-cpu --optimized-turbo` are given, which allow you to use this model on a 6GB GPU. However, some features might not be available in the mode. [You can find the full list of arguments here.](https://github.com/hlky/stable-diffusion-webui/blob/2b1ac8daf7ea82c6c56eabab7e80ec1c33106a98/scripts/webui.py)

|

## Disclaimer

|

||||||

|

|

||||||

You can set the `WEBUI_SHA` to [any SHA from the main repo](https://github.com/hlky/stable-diffusion/commits/main), this will build the container against that commit. Use at your own risk.

|

|

||||||

|

|

||||||

# Disclaimer

|

|

||||||

|

|

||||||

The authors of this project are not responsible for any content generated using this interface.

|

The authors of this project are not responsible for any content generated using this interface.

|

||||||

|

|

||||||

This license of this software forbids you from sharing any content that violates any laws, produce any harm to a person, disseminate any personal information that would be meant for harm, spread misinformation and target vulnerable groups. For the full list of restrictions please read [the license](./LICENSE).

|

This license of this software forbids you from sharing any content that violates any laws, produce any harm to a person, disseminate any personal information that would be meant for harm, spread misinformation and target vulnerable groups. For the full list of restrictions please read [the license](./LICENSE).

|

||||||

|

|

||||||

# Thanks

|

## Thanks

|

||||||

|

|

||||||

Special thanks to everyone behind these awesome projects, without them, none of this would have been possible:

|

Special thanks to everyone behind these awesome projects, without them, none of this would have been possible:

|

||||||

|

|

||||||

@@ -89,3 +71,4 @@ Special thanks to everyone behind these awesome projects, without them, none of

|

|||||||

- [CompVis/stable-diffusion](https://github.com/CompVis/stable-diffusion)

|

- [CompVis/stable-diffusion](https://github.com/CompVis/stable-diffusion)

|

||||||

- [hlky/sd-enable-textual-inversion](https://github.com/hlky/sd-enable-textual-inversion)

|

- [hlky/sd-enable-textual-inversion](https://github.com/hlky/sd-enable-textual-inversion)

|

||||||

- [devilismyfriend/latent-diffusion](https://github.com/devilismyfriend/latent-diffusion)

|

- [devilismyfriend/latent-diffusion](https://github.com/devilismyfriend/latent-diffusion)

|

||||||

|

- [Hafiidz/latent-diffusion](https://github.com/Hafiidz/latent-diffusion)

|

||||||

|

|||||||

4

cache/.gitignore

vendored


@@ -1,3 +1,5 @@
 /torch
 /transformers
 /weights
+/models
+/custom-models

@@ -1,21 +1,11 @@
 version: '3.9'

-services:
-  model:
-    build:
-      context: ./hlky/
-      args:
-        # You can choose any commit sha from https://github.com/hlky/stable-diffusion/commits/main
-        # USE AT YOUR OWN RISK! otherwise just leave it empty.
-        WEBUI_SHA:
+x-base_service: &base_service
     ports:
       - "7860:7860"
     volumes:
-      - ./cache:/cache
-      - ./output:/output
-      - ./models:/models
-    environment:
-      - CLI_ARGS=--extra-models-cpu --optimized-turbo
+      - &v1 ./cache:/cache
+      - &v2 ./output:/output
     deploy:
       resources:
         reservations:
@@ -23,3 +13,45 @@ services:
             - driver: nvidia
               device_ids: ['0']
               capabilities: [gpu]
+
+name: webui-docker
+
+services:
+  download:
+    build: ./services/download/
+    profiles: ["download"]
+    volumes:
+      - *v1
+
+  hlky:
+    <<: *base_service
+    profiles: ["hlky"]
+    build: ./services/hlky/
+    environment:
+      - CLI_ARGS=--optimized-turbo
+
+  automatic1111: &automatic
+    <<: *base_service
+    profiles: ["auto"]
+    build: ./services/AUTOMATIC1111
+    volumes:
+      - *v1
+      - *v2
+      - ./services/AUTOMATIC1111/config.json:/stable-diffusion-webui/config.json
+    environment:
+      - CLI_ARGS=--medvram --opt-split-attention
+
+  automatic1111-cpu:
+    <<: *automatic
+    profiles: ["auto-cpu"]
+    deploy: {}
+    environment:
+      - CLI_ARGS=--no-half --precision full
+
+  lstein:
+    <<: *base_service
+    profiles: ["lstein"]
+    build: ./services/lstein/
+    environment:
+      - PRELOAD=false
+      - CLI_ARGS=
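The new compose file leans on YAML anchors and the `<<` merge key: each service starts from `x-base_service` and overrides individual keys. The sketch below models that behaviour with plain dictionaries, under the assumption that the merge is shallow (a key set locally replaces the inherited value wholesale, lists included). It is an illustration only, not how Docker Compose loads the file, and `merge_key` is a name invented here.

```python
def merge_key(base, overrides):
    """Model YAML's `<<` merge key: shallow merge, locally set keys win."""
    merged = dict(base)
    merged.update(overrides)
    return merged


GPU_DEPLOY = {"resources": {"reservations": {"devices": [
    {"driver": "nvidia", "device_ids": ["0"], "capabilities": ["gpu"]},
]}}}

# x-base_service: &base_service
base_service = {
    "ports": ["7860:7860"],
    "volumes": ["./cache:/cache", "./output:/output"],
    "deploy": GPU_DEPLOY,
}

# automatic1111: &automatic
automatic = merge_key(base_service, {
    "profiles": ["auto"],
    "build": "./services/AUTOMATIC1111",
    "volumes": [
        "./cache:/cache",
        "./output:/output",
        "./services/AUTOMATIC1111/config.json:/stable-diffusion-webui/config.json",
    ],
    "environment": ["CLI_ARGS=--medvram --opt-split-attention"],
})

# automatic1111-cpu: inherits from &automatic, drops the GPU reservation.
automatic_cpu = merge_key(automatic, {
    "profiles": ["auto-cpu"],
    "deploy": {},
    "environment": ["CLI_ARGS=--no-half --precision full"],
})

if __name__ == "__main__":
    print(automatic_cpu["deploy"])       # GPU reservation removed
    print(automatic_cpu["environment"])  # list replaced, not appended
```

This is why `automatic1111` has to repeat `*v1` and `*v2` when it adds the `config.json` mount: redefining `volumes` discards the inherited list rather than extending it.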

@@ -1,30 +0,0 @@
-#!/bin/bash
-
-set -e
-
-declare -A MODELS
-MODELS["/stable-diffusion/src/gfpgan/experiments/pretrained_models/GFPGANv1.3.pth"]=GFPGANv1.3.pth
-MODELS["/stable-diffusion/src/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus.pth"]=RealESRGAN_x4plus.pth
-MODELS["/stable-diffusion/src/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth"]=RealESRGAN_x4plus_anime_6B.pth
-MODELS["/latent-diffusion/experiments/pretrained_models/model.ckpt"]=LDSR.ckpt
-# MODELS["/latent-diffusion/experiments/pretrained_models/project.yaml"]=LDSR.yaml
-
-for path in "${!MODELS[@]}"; do
-  name=${MODELS[$path]}
-  base=$(dirname "${path}")
-  from_path="/models/${name}"
-  if test -f "${from_path}"; then
-    mkdir -p "${base}" && ln -sf "${from_path}" "${path}" && echo "Mounted ${name}"
-  else
-    echo "Skipping ${name}"
-  fi
-done
-
-# hack for latent-diffusion
-if test -f /models/LDSR.yaml; then
-  sed 's/ldm\./ldm_latent\./g' /models/LDSR.yaml >/latent-diffusion/experiments/pretrained_models/project.yaml
-fi
-
-# force facexlib cache
-mkdir -p /cache/weights/ /stable-diffusion/gfpgan/
-ln -sf /cache/weights/ /stable-diffusion/gfpgan/
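The mount script's core loop maps each path the UI expects to a file name in the models folder, symlinks the ones that exist, and skips the rest. A minimal Python sketch of the same idea is shown below; it is only an illustration of the pattern, and the function name `mount_models` and its arguments are hypothetical, not part of the repository.

```python
import os


def mount_models(models, models_dir):
    """Symlink each available model into the path the application expects.

    `models` maps target path -> file name inside `models_dir`.
    Returns the names that were linked.
    """
    mounted = []
    for target, name in models.items():
        source = os.path.join(models_dir, name)
        if not os.path.isfile(source):
            print(f"Skipping {name}")
            continue
        os.makedirs(os.path.dirname(target), exist_ok=True)
        # Mirror `ln -sf`: replace an existing file or link at the target.
        if os.path.lexists(target):
            os.remove(target)
        os.symlink(source, target)
        print(f"Mounted {name}")
        mounted.append(name)
    return mounted
```

Because missing files are skipped rather than treated as errors, optional models stay optional: the container starts with whatever subset the user downloaded.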

@@ -1,29 +0,0 @@
-# syntax=docker/dockerfile:1
-
-FROM continuumio/miniconda3:4.12.0
-
-SHELL ["/bin/bash", "-ceuxo", "pipefail"]
-
-RUN conda install python=3.8.5 && conda clean -a -y
-RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y
-
-RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean
-
-
-RUN <<EOF
-git clone https://github.com/lstein/stable-diffusion.git
-cd stable-diffusion
-git reset --hard 751283a2de81bee4bb571fbabe4adb19f1d85b97
-conda env update --file environment.yaml -n base
-conda clean -a -y
-EOF
-
-ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""
-
-WORKDIR /stable-diffusion
-
-EXPOSE 7860
-# run, -u to not buffer stdout / stderr
-CMD mkdir -p /stable-diffusion/models/ldm/stable-diffusion-v1/ && \
-ln -sf /models/model.ckpt /stable-diffusion/models/ldm/stable-diffusion-v1/model.ckpt && \
-python3 -u scripts/dream.py --outdir /output --web --host 0.0.0.0 --port 7860 ${CLI_ARGS}

@@ -1,14 +0,0 @@
-# WebUI for lstein
-
-The WebUI of [lstein/stable-diffusion](https://github.com/lstein/stable-diffusion) as docker container!
-
-Although it is a simple UI, the project has a lot of potential.
-
-## Setup
-
-Clone this repo, download the `model.ckpt` and put into the `models` folder as mentioned in [the main README](../README.md), then run
-
-```
-cd lstein
-docker compose up --build
-```

@@ -1,20 +0,0 @@
-version: '3.9'
-
-services:
-  model:
-    build: .
-    ports:
-      - "7860:7860"
-    volumes:
-      - ../cache:/cache
-      - ../output:/output
-      - ../models:/models
-    environment:
-      - CLI_ARGS=
-    deploy:
-      resources:
-        reservations:
-          devices:
-            - driver: nvidia
-              device_ids: ['0']
-              capabilities: [gpu]

7

models/.gitignore

vendored


@@ -1,7 +0,0 @@
-/model.ckpt
-/GFPGANv1.3.pth
-/RealESRGAN_x2plus.pth
-/RealESRGAN_x4plus.pth
-/RealESRGAN_x4plus_anime_6B.pth
-/LDSR.ckpt
-/LDSR.yaml

5

scripts/chmod.sh

Executable file


@@ -0,0 +1,5 @@
+#!/bin/bash
+
+set -Eeuo pipefail
+
+find . -name "*.sh" -exec git update-index --chmod=+x {} \;
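The helper script above marks every `*.sh` file as executable in the git index, which keeps the bit set even when the files are edited on a filesystem that does not track it. As a hedged sketch of what the `find ... -exec` line expands to, the snippet below only builds the equivalent `git update-index` commands without running them; `chmod_commands` is a hypothetical name.

```python
from pathlib import Path


def chmod_commands(root):
    """List the git commands the script would run, one per *.sh file."""
    root = Path(root)
    commands = []
    for script in sorted(root.rglob("*.sh")):
        relative = script.relative_to(root).as_posix()
        commands.append(["git", "update-index", "--chmod=+x", relative])
    return commands


if __name__ == "__main__":
    for command in chmod_commands("."):
        print(" ".join(command))
```

Printing the commands first is a cheap way to check which files would be touched before changing the index.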

@@ -2,52 +2,64 @@

 FROM alpine/git:2.36.2 as download
 RUN <<EOF
-# who knows
+# because taming-transformers is huge
 git config --global http.postBuffer 1048576000
 git clone https://github.com/sczhou/CodeFormer.git repositories/CodeFormer
 git clone https://github.com/CompVis/stable-diffusion.git repositories/stable-diffusion
+git clone https://github.com/salesforce/BLIP.git repositories/BLIP
 git clone https://github.com/CompVis/taming-transformers.git repositories/taming-transformers
 rm -rf repositories/taming-transformers/data repositories/taming-transformers/assets
 EOF

-FROM pytorch/pytorch:1.12.1-cuda11.3-cudnn8-runtime
+
+FROM continuumio/miniconda3:4.12.0

 SHELL ["/bin/bash", "-ceuxo", "pipefail"]

 ENV DEBIAN_FRONTEND=noninteractive
-RUN apt-get update && apt-get install git fonts-dejavu-core -y && apt-get clean
+
+RUN conda install python=3.8.5 && conda clean -a -y
+RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y
+
+RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean
+

 RUN <<EOF
 git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git
 cd stable-diffusion-webui
-git reset --hard db6db585eb9ee48e7315e28603e18531dbc87067
-pip install -U --prefer-binary --no-cache-dir -r requirements.txt
+git reset --hard 13eec4f3d4081fdc43883c5ef02e471a2b6c7212
+conda env update --file environment-wsl2.yaml -n base
+conda clean -a -y
+pip install --prefer-binary --no-cache-dir -r requirements.txt
 EOF

-ENV ROOT=/workspace/stable-diffusion-webui \
-WORKDIR=/workspace/stable-diffusion-webui/repositories/stable-diffusion
+ENV ROOT=/stable-diffusion-webui \
+WORKDIR=/stable-diffusion-webui/repositories/stable-diffusion

 COPY --from=download /git/ ${ROOT}
-RUN pip install --prefer-binary -U --no-cache-dir -r ${ROOT}/repositories/CodeFormer/requirements.txt
+RUN pip install --prefer-binary --no-cache-dir -r ${ROOT}/repositories/CodeFormer/requirements.txt

 # Note: don't update the sha of previous versions because the install will take forever
 # instead, update the repo state in a later step
-ARG SHA=701f76b29ab8fa9c1d35ae8abce36b99e12d5d08
+
+ARG SHA=99585b3514e2d7e987651d5c6a0806f933af012b
 RUN <<EOF
 cd stable-diffusion-webui
-git pull
+git pull --rebase
 git reset --hard ${SHA}
 pip install --prefer-binary --no-cache-dir -r requirements.txt
 EOF

-RUN pip install --prefer-binary -U --no-cache-dir opencv-python-headless markupsafe==2.0.1
+RUN pip install --prefer-binary -U --no-cache-dir opencv-python-headless

 ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""

 COPY . /docker
 RUN chmod +x /docker/mount.sh && python3 /docker/info.py ${ROOT}/modules/ui.py

+
 WORKDIR ${WORKDIR}
 EXPOSE 7860
 # run, -u to not buffer stdout / stderr
-CMD /docker/mount.sh && python3 -u ../../webui.py --listen --port 7860 ${CLI_ARGS}
+CMD /docker/mount.sh && \
+python3 -u ../../webui.py --listen --port 7860 --hide-ui-dir-config --ckpt-dir /cache/custom-models --ckpt /cache/models/model.ckpt ${CLI_ARGS}

58

services/AUTOMATIC1111/config.json

Normal file


@@ -0,0 +1,58 @@
+{
+  "outdir_samples": "/output",
+  "outdir_txt2img_samples": "/output/txt2img-images",
+  "outdir_img2img_samples": "/output/img2img-images",
+  "outdir_extras_samples": "/output/extras-images",
+  "outdir_txt2img_grids": "/output/txt2img-grids",
+  "outdir_img2img_grids": "/output/img2img-grids",
+  "outdir_save": "/output/saved",
+  "font": "DejaVuSans.ttf",
+  "__WARNING__": "DON'T CHANGE ANYTHING BEFORE THIS",
+
+  "samples_filename_format": "",
+  "outdir_grids": "",
+  "save_to_dirs": false,
+  "grid_save_to_dirs": false,
+  "save_to_dirs_prompt_len": 10,
+  "samples_save": true,
+  "samples_format": "png",
+  "grid_save": true,
+  "return_grid": true,
+  "grid_format": "png",
+  "grid_extended_filename": false,
+  "grid_only_if_multiple": true,
+  "n_rows": -1,
+  "jpeg_quality": 80,
+  "export_for_4chan": true,
+  "enable_pnginfo": true,
+  "add_model_hash_to_info": false,
+  "enable_emphasis": true,
+  "save_txt": false,
+  "ESRGAN_tile": 192,
+  "ESRGAN_tile_overlap": 8,
+  "random_artist_categories": [],
+  "upscale_at_full_resolution_padding": 16,
+  "show_progressbar": true,
+  "show_progress_every_n_steps": 7,
+  "multiple_tqdm": true,
+  "face_restoration_model": null,
+  "code_former_weight": 0.5,
+  "save_images_before_face_restoration": false,
+  "face_restoration_unload": false,
+  "interrogate_keep_models_in_memory": false,
+  "interrogate_use_builtin_artists": true,
+  "interrogate_clip_num_beams": 1,
+  "interrogate_clip_min_length": 24,
+  "interrogate_clip_max_length": 48,
+  "interrogate_clip_dict_limit": 1500.0,
+  "samples_filename_pattern": "",
+  "directories_filename_pattern": "",
+  "save_selected_only": false,
+  "filter_nsfw": false,
+  "img2img_color_correction": false,
+  "img2img_fix_steps": false,
+  "enable_quantization": false,
+  "enable_batch_seeds": true,
+  "memmon_poll_rate": 8,
+  "sd_model_checkpoint": null
+}
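The first block of this config pins every output directory under `/output`, which is the path the compose file mounts from the host; the `__WARNING__` entry is there to discourage edits to that block. A small sketch of a sanity check for that invariant is given below. It is an assumption-laden illustration (the helper `outdirs_outside_volume` does not exist in the repository, and only a subset of the keys is embedded), not something the project ships.

```python
import json
import posixpath

# Subset of services/AUTOMATIC1111/config.json, copied from the diff above.
CONFIG_SNIPPET = """
{
  "outdir_samples": "/output",
  "outdir_txt2img_samples": "/output/txt2img-images",
  "outdir_save": "/output/saved",
  "outdir_grids": "",
  "font": "DejaVuSans.ttf"
}
"""


def outdirs_outside_volume(config, volume="/output"):
    """Return the outdir_* keys whose non-empty path is not under `volume`."""
    offenders = []
    for key, value in config.items():
        if not key.startswith("outdir_") or not value:
            continue  # unrelated setting, or an empty value
        path = posixpath.normpath(value)
        if path != volume and not path.startswith(volume + "/"):
            offenders.append(key)
    return offenders


if __name__ == "__main__":
    print(outdirs_outside_volume(json.loads(CONFIG_SNIPPET)))
```

Anything written outside the mounted volume would be lost when the container is removed, which is the failure this check is meant to catch.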

@@ -7,7 +7,7 @@ file.write_text(
 .replace(' return demo', """
 with demo:
 gr.Markdown(
-'Created by [AUTOMATIC1111 / stable-diffusion-webui-docker](https://github.com/AbdBarho/stable-diffusion-webui-docker/tree/master/AUTOMATIC1111)'
+'Created by [AUTOMATIC1111 / stable-diffusion-webui-docker](https://github.com/AbdBarho/stable-diffusion-webui-docker/)'
 )
 return demo
 """, 1)

@@ -1,14 +1,18 @@
 #!/bin/bash
 
+set -e
+
 declare -A MODELS
 
 MODELS["${WORKDIR}/models/ldm/stable-diffusion-v1/model.ckpt"]=model.ckpt
 MODELS["${ROOT}/GFPGANv1.3.pth"]=GFPGANv1.3.pth
 
+MODELS_DIR=/cache/models
+
 for path in "${!MODELS[@]}"; do
   name=${MODELS[$path]}
   base=$(dirname "${path}")
-  from_path="/models/${name}"
+  from_path="${MODELS_DIR}/${name}"
   if test -f "${from_path}"; then
     mkdir -p "${base}" && ln -sf "${from_path}" "${path}" && echo "Mounted ${name}"
   else
@@ -17,8 +21,8 @@ for path in "${!MODELS[@]}"; do
 done
 
 # force realesrgan cache
-rm -rf /opt/conda/lib/python3.7/site-packages/realesrgan/weights
-ln -s -T /models /opt/conda/lib/python3.7/site-packages/realesrgan/weights
+rm -rf /opt/conda/lib/python3.8/site-packages/realesrgan/weights
+ln -s -T "${MODELS_DIR}" /opt/conda/lib/python3.8/site-packages/realesrgan/weights
 
 # force facexlib cache
 mkdir -p /cache/weights/ ${WORKDIR}/gfpgan/
@@ -28,5 +32,4 @@ rm -rf ${ROOT}/repositories/CodeFormer/weights/CodeFormer ${ROOT}/repositories/C
 ln -sf -T /cache/weights ${ROOT}/repositories/CodeFormer/weights/CodeFormer
 ln -sf -T /cache/weights ${ROOT}/repositories/CodeFormer/weights/facelib
 
-# mount config
-ln -sf /docker/config.json ${WORKDIR}/config.json
+mkdir -p /cache/torch /cache/transformers /cache/weights /cache/models /cache/custom-models

6

services/download/Dockerfile

Normal file

@@ -0,0 +1,6 @@
+FROM bash:alpine3.15
+
+RUN apk add parallel aria2
+COPY . /docker
+RUN chmod +x /docker/download.sh
+ENTRYPOINT ["/docker/download.sh"]

6

services/download/checksums.sha256

Normal file

@@ -0,0 +1,6 @@
+fe4efff1e174c627256e44ec2991ba279b3816e364b49f9be2abc0b3ff3f8556  /cache/models/model.ckpt
+c953a88f2727c85c3d9ae72e2bd4846bbaf59fe6972ad94130e23e7017524a70  /cache/models/GFPGANv1.3.pth
+4fa0d38905f75ac06eb49a7951b426670021be3018265fd191d2125df9d682f1  /cache/models/RealESRGAN_x4plus.pth
+f872d837d3c90ed2e05227bed711af5671a6fd1c9f7d7e91c911a61f155e99da  /cache/models/RealESRGAN_x4plus_anime_6B.pth
+c209caecac2f97b4bb8f4d726b70ac2ac9b35904b7fc99801e1f5e61f9210c13  /cache/models/LDSR.ckpt
+9d6ad53c5dafeb07200fb712db14b813b527edd262bc80ea136777bdb41be2ba  /cache/models/LDSR.yaml

13

services/download/download.sh

Executable file

@@ -0,0 +1,13 @@
+#!/usr/bin/env bash
+
+set -Eeuo pipefail
+
+mkdir -p /cache/torch /cache/transformers /cache/weights /cache/models /cache/custom-models
+
+echo "Downloading, this might take a while..."
+
+aria2c --input-file /docker/links.txt --dir /cache/models --continue
+
+echo "Checking SHAs..."
+
+parallel --will-cite -a /docker/checksums.sha256 "echo -n {} | sha256sum -c"
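The "Checking SHAs..." step in `download.sh` compares each downloaded file against an entry in `checksums.sha256`. As a rough illustration of what that check amounts to, here is a minimal Python sketch. It is not part of this change set: the function names `parse_checksum_line`, `sha256_of`, and `verify` are hypothetical, and the sketch assumes the usual `<hex digest>  <path>` line layout that `sha256sum -c` reads.

```python
import hashlib
from pathlib import Path
from typing import Dict, Tuple


def parse_checksum_line(line: str) -> Tuple[str, str]:
    """Split one '<sha256-hex>  <path>' line into (digest, path)."""
    digest, path = line.split(maxsplit=1)
    return digest.lower(), path.strip()


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large checkpoints are never fully loaded into memory."""
    hasher = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest()


def verify(checksum_file: Path) -> Dict[str, bool]:
    """Return {path: matches} for every non-empty line of the checksum file.

    A missing file is reported as False rather than raising.
    """
    results = {}
    for line in checksum_file.read_text().splitlines():
        if not line.strip():
            continue
        expected, target = parse_checksum_line(line)
        target_path = Path(target)
        results[target] = target_path.is_file() and sha256_of(target_path) == expected
    return results
```

Calling `verify(Path("/docker/checksums.sha256"))` inside the container would return one boolean per model file. The shell script instead fans the lines out to `sha256sum -c` through `parallel`, so the checks run concurrently.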

12

services/download/links.txt

Normal file

@@ -0,0 +1,12 @@
+https://www.googleapis.com/storage/v1/b/aai-blog-files/o/sd-v1-4.ckpt?alt=media
+  out=model.ckpt
+https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth
+  out=GFPGANv1.3.pth
+https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth
+  out=RealESRGAN_x4plus.pth
+https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth
+  out=RealESRGAN_x4plus_anime_6B.pth
+https://heibox.uni-heidelberg.de/f/31a76b13ea27482981b4/?dl=1
+  out=LDSR.yaml
+https://heibox.uni-heidelberg.de/f/578df07c8fc04ffbadf3/?dl=1
+  out=LDSR.ckpt
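`links.txt` uses the aria2 input-file layout: a URI line, followed by option lines that begin with whitespace (here only `out=`, the file name to save under). The sketch below shows one way such a file could be read back into URL/option pairs, for example to cross-check it against the checksum list. The helper name `parse_aria2_input` is hypothetical, and the sketch ignores multi-URI (TAB-separated) lines, which this file does not use.

```python
from typing import Dict, List, Tuple


def parse_aria2_input(text: str) -> List[Tuple[str, Dict[str, str]]]:
    """Parse aria2 input-file text into a list of (uri, options) pairs.

    Lines starting with whitespace are options for the preceding URI;
    blank lines and lines starting with '#' are skipped.
    """
    entries: List[Tuple[str, Dict[str, str]]] = []
    for raw in text.splitlines():
        if not raw.strip() or raw.startswith("#"):
            continue
        if raw[0] in " \t":
            if not entries:
                raise ValueError("option line appears before any URI")
            key, _, value = raw.strip().partition("=")
            entries[-1][1][key] = value
        else:
            entries.append((raw.strip(), {}))
    return entries
```

For the file above, each entry would carry a single `out` option, so `{opts["out"] for _, opts in parse_aria2_input(text)}` gives the set of file names expected under `/cache/models`.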

@@ -4,16 +4,19 @@ FROM continuumio/miniconda3:4.12.0
 
 SHELL ["/bin/bash", "-ceuxo", "pipefail"]
 
+ENV DEBIAN_FRONTEND=noninteractive
+
 RUN conda install python=3.8.5 && conda clean -a -y
 RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y
 
-RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean
+RUN apt-get update && apt install fonts-dejavu-core rsync gcc -y && apt-get clean
 
 
 RUN <<EOF
+git config --global http.postBuffer 1048576000
 git clone https://github.com/sd-webui/stable-diffusion-webui.git stable-diffusion
 cd stable-diffusion
-git reset --hard 2b1ac8daf7ea82c6c56eabab7e80ec1c33106a98
+git reset --hard 7623a5734740025d79b710f3744bff9276e1467b
 conda env update --file environment.yaml -n base
 conda clean -a -y
 EOF
@@ -23,23 +26,24 @@ RUN pip install -U --no-cache-dir pyperclip
 
 # Note: don't update the sha of previous versions because the install will take forever
 # instead, update the repo state in a later step
-ARG WEBUI_SHA=0dffc3918d596ad36a32ac56ecf4d523f490ae5e
-RUN cd stable-diffusion && git pull && git reset --hard ${WEBUI_SHA} && \
-  conda env update --file environment.yaml --name base && conda clean -a -y
-
-# Textual inversion
+ARG BRANCH=master
+ARG SHA=833a91047df999302f699637768741cecee9c37b
+# ARG BRANCH=dev
+# ARG SHA=5f3d7facdea58fc4f89b8c584d22a4639615a2f8
 RUN <<EOF
-git clone https://github.com/hlky/sd-enable-textual-inversion.git &&
-cd /sd-enable-textual-inversion && git reset --hard 08f9b5046552d17cf7327b30a98410222741b070 &&
-rsync -a /sd-enable-textual-inversion/ /stable-diffusion/ &&
-rm -rf /sd-enable-textual-inversion
+cd stable-diffusion
+git fetch
+git checkout ${BRANCH}
+git reset --hard ${SHA}
+conda env update --file environment.yaml -n base
+conda clean -a -y
 EOF
 
 # Latent diffusion
 RUN <<EOF
-git clone https://github.com/devilismyfriend/latent-diffusion &&
-cd /latent-diffusion &&
-git reset --hard 6d61fc03f15273a457950f2cdc10dddf53ba6809 &&
+git clone https://github.com/Hafiidz/latent-diffusion.git
+cd latent-diffusion
+git reset --hard e1a84a89fcbb49881546cf2acf1e7e250923dba0
 # hacks all the way down
 mv ldm ldm_latent &&
 sed -i -- 's/from ldm/from ldm_latent/g' *.py
@@ -55,4 +59,6 @@ WORKDIR /stable-diffusion
 ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""
 EXPOSE 7860
 # run, -u to not buffer stdout / stderr
-CMD /docker/mount.sh && python3 -u scripts/webui.py --outdir /output --ckpt /models/model.ckpt --ldsr-dir /latent-diffusion ${CLI_ARGS}
+CMD /docker/mount.sh && \
+  python3 -u scripts/webui.py --outdir /output --ckpt /cache/models/model.ckpt --ldsr-dir /latent-diffusion --inbrowser ${CLI_ARGS}
+  # STREAMLIT_SERVER_PORT=7860 python -m streamlit run scripts/webui_streamlit.py

38

services/hlky/mount.sh

Executable file

@@ -0,0 +1,38 @@
+#!/bin/bash
+
+set -e
+
+declare -A MODELS
+
+ROOT=/stable-diffusion/src
+
+MODELS["${ROOT}/gfpgan/experiments/pretrained_models/GFPGANv1.3.pth"]=GFPGANv1.3.pth
+MODELS["${ROOT}/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus.pth"]=RealESRGAN_x4plus.pth
+MODELS["${ROOT}/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth"]=RealESRGAN_x4plus_anime_6B.pth
+MODELS["/latent-diffusion/experiments/pretrained_models/model.ckpt"]=LDSR.ckpt
+# MODELS["/latent-diffusion/experiments/pretrained_models/project.yaml"]=LDSR.yaml
+
+MODELS_DIR=/cache/models
+
+for path in "${!MODELS[@]}"; do
+  name=${MODELS[$path]}
+  base=$(dirname "${path}")
+  from_path="${MODELS_DIR}/${name}"
+  if test -f "${from_path}"; then
+    mkdir -p "${base}" && ln -sf "${from_path}" "${path}" && echo "Mounted ${name}"
+  else
+    echo "Skipping ${name}"
+  fi
+done
+
+# hack for latent-diffusion
+if test -f "${MODELS_DIR}/LDSR.yaml"; then
+  sed 's/ldm\./ldm_latent\./g' "${MODELS_DIR}/LDSR.yaml" >/latent-diffusion/experiments/pretrained_models/project.yaml
+fi
+
+# force facexlib cache
+mkdir -p /cache/weights/ /stable-diffusion/gfpgan/
+ln -sf /cache/weights/ /stable-diffusion/gfpgan/
+
+# streamlit config
+ln -sf /docker/webui_streamlit.yaml /stable-diffusion/configs/webui/webui_streamlit.yaml
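The loop in `mount.sh` maps each path the web UI expects to a file name in the shared `/cache/models` directory, then symlinks whichever files are present and skips the rest. For readers less familiar with bash associative arrays, the same idea can be sketched in Python. This is only an illustration under stated assumptions: `mount_models` is a hypothetical helper, and it replaces existing files or links at the target (like `ln -sf`) but does not handle the case where the target is a directory.

```python
from pathlib import Path
from typing import Dict, List


def mount_models(models: Dict[str, str], models_dir: Path) -> List[str]:
    """Symlink each expected target path to models_dir/<name> when that file exists.

    Returns the names that were linked; absent files are skipped, not treated as errors.
    """
    mounted = []
    for target, name in models.items():
        source = models_dir / name
        if not source.is_file():
            print(f"Skipping {name}")
            continue
        target_path = Path(target)
        target_path.parent.mkdir(parents=True, exist_ok=True)  # mkdir -p "${base}"
        if target_path.is_symlink() or target_path.exists():
            target_path.unlink()  # emulate the -f in `ln -sf` for files and links
        target_path.symlink_to(source)
        print(f"Mounted {name}")
        mounted.append(name)
    return mounted
```

Because absent files are only skipped, optional models such as the Real-ESRGAN weights can be left out of the cache without breaking container start-up.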

155

services/hlky/webui_streamlit.yaml

Normal file

@@ -0,0 +1,155 @@
+# UI defaults configuration file. It is automatically loaded if located at configs/webui/webui_streamlit.yaml.
+general:
+    gpu: 0
+    outdir: /outputs
+
+    default_model: "Stable Diffusion v1.4"
+    default_model_config: "configs/stable-diffusion/v1-inference.yaml"
+    default_model_path: "/cache/models/model.ckpt"
+    fp:
+        name:
+    GFPGAN_dir: "./src/gfpgan"
+    RealESRGAN_dir: "./src/realesrgan"
+    RealESRGAN_model: "RealESRGAN_x4plus"
+    outdir_txt2img: /outputs/txt2img-samples
+    outdir_img2img: /outputs/img2img-samples
+    gfpgan_cpu: False
+    esrgan_cpu: False
+    extra_models_cpu: False
+    extra_models_gpu: False
+    save_metadata: True
+    save_format: "png"
+    skip_grid: False
+    skip_save: False
+    grid_format: "jpg:95"
+    n_rows: -1
+    no_verify_input: False
+    no_half: False
+    use_float16: False
+    precision: "autocast"
+    optimized: False
+    optimized_turbo: True
+    optimized_config: "optimizedSD/v1-inference.yaml"
+    update_preview: True
+    update_preview_frequency: 5
+
+txt2img:
+    prompt:
+    height: 512
+    width: 512
+    cfg_scale: 7.5
+    seed: ""
+    batch_count: 1
+    batch_size: 1
+    sampling_steps: 30
+    default_sampler: "k_euler"
+    separate_prompts: False
+    update_preview: True
+    update_preview_frequency: 5
+    normalize_prompt_weights: True
+    save_individual_images: True
+    save_grid: True
+    group_by_prompt: True
+    save_as_jpg: False
+    use_GFPGAN: False
+    use_RealESRGAN: False
+    RealESRGAN_model: "RealESRGAN_x4plus"
+    variant_amount: 0.0
+    variant_seed: ""
+    write_info_files: True
+
+txt2vid:
+    default_model: "CompVis/stable-diffusion-v1-4"
+    custom_models_list:
+        [
+            "CompVis/stable-diffusion-v1-4",
+            "naclbit/trinart_stable_diffusion_v2",
+            "hakurei/waifu-diffusion",
+            "osanseviero/BigGAN-deep-128",
+        ]
+    prompt:
+    height: 512
+    width: 512
+    cfg_scale: 7.5
+    seed: ""
+    batch_count: 1
+    batch_size: 1
+    sampling_steps: 30
+    num_inference_steps: 200
+    default_sampler: "k_euler"
+    scheduler_name: "klms"
+    separate_prompts: False
+    update_preview: True
+    update_preview_frequency: 5
+    dynamic_preview_frequency: True
+    normalize_prompt_weights: True
+    save_individual_images: True
+    save_video: True
+    group_by_prompt: True
+    write_info_files: True
+    do_loop: False
+    save_as_jpg: False
+    use_GFPGAN: False
+    use_RealESRGAN: False
+    RealESRGAN_model: "RealESRGAN_x4plus"
+    variant_amount: 0.0
+    variant_seed: ""
+    beta_start: 0.00085
+    beta_end: 0.012
+    beta_scheduler_type: "linear"
+    max_frames: 1000
+
+img2img:
+    prompt:
+    sampling_steps: 30
+    # Adding an int to toggles enables the corresponding feature.
+    # 0: Create prompt matrix (separate multiple prompts using |, and get all combinations of them)
+    # 1: Normalize Prompt Weights (ensure sum of weights add up to 1.0)
+    # 2: Loopback (use images from previous batch when creating next batch)
+    # 3: Random loopback seed
+    # 4: Save individual images
+    # 5: Save grid
+    # 6: Sort samples by prompt
+    # 7: Write sample info files
+    # 8: jpg samples
+    # 9: Fix faces using GFPGAN
+    # 10: Upscale images using Real-ESRGAN
+    sampler_name: "k_euler"
+    denoising_strength: 0.45
+    # 0: Keep masked area
+    # 1: Regenerate only masked area
+    mask_mode: 0
+    mask_restore: False
+    # 0: Just resize
+    # 1: Crop and resize
+    # 2: Resize and fill
+    resize_mode: 0
+    # Leave blank for random seed:
+    seed: ""
+    ddim_eta: 0.0
+    cfg_scale: 7.5
+    batch_count: 1
+    batch_size: 1
+    height: 512
+    width: 512
+    # Textual inversion embeddings file path:
+    fp: ""
+    loopback: True
+    random_seed_loopback: True
+    separate_prompts: False
+    update_preview: True
+    update_preview_frequency: 5
+    normalize_prompt_weights: True
+    save_individual_images: True
+    save_grid: True
+    group_by_prompt: True
+    save_as_jpg: False
+    use_GFPGAN: False
+    use_RealESRGAN: False
+    RealESRGAN_model: "RealESRGAN_x4plus"
+    variant_amount: 0.0
+    variant_seed: ""
+    write_info_files: True
+
+gfpgan:
+    strength: 100

44

services/lstein/Dockerfile

Normal file

@@ -0,0 +1,44 @@
+# syntax=docker/dockerfile:1
+
+FROM continuumio/miniconda3:4.12.0
+
+SHELL ["/bin/bash", "-ceuxo", "pipefail"]
+
+ENV DEBIAN_FRONTEND=noninteractive
+
+RUN conda install python=3.8.5 && conda clean -a -y
+RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y
+
+RUN apt-get update && apt install fonts-dejavu-core rsync gcc -y && apt-get clean
+
+
+RUN <<EOF
+git clone https://github.com/lstein/stable-diffusion.git
+cd stable-diffusion
+git reset --hard 751283a2de81bee4bb571fbabe4adb19f1d85b97
+conda env update --file environment.yaml -n base
+conda clean -a -y
+EOF
+
+
+ARG BRANCH=development SHA=45af30f3a4c98b50c755717831c5fff75a3a8b43
+# ARG BRANCH=main SHA=89da371f4841f7e05da5a1672459d700c3920784
+RUN <<EOF
+cd stable-diffusion
+git fetch
+git checkout ${BRANCH}
+git reset --hard ${SHA}
+conda env update --file environment.yaml -n base
+conda clean -a -y
+EOF
+
+RUN pip uninstall opencv-python -y && pip install --prefer-binary --upgrade --force-reinstall --no-cache-dir opencv-python-headless
+
+COPY . /docker/
+RUN python3 /docker/info.py /stable-diffusion/static/dream_web/index.html && chmod +x /docker/mount.sh
+
+ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch PRELOAD=false CLI_ARGS=""
+WORKDIR /stable-diffusion
+EXPOSE 7860
+
+CMD /docker/mount.sh && python3 -u scripts/dream.py --outdir /output --web --host 0.0.0.0 --port 7860 ${CLI_ARGS}

10

services/lstein/info.py

Normal file

@@ -0,0 +1,10 @@
+import sys
+from pathlib import Path
+
+file = Path(sys.argv[1])
+file.write_text(
+  file.read_text()\
+  .replace('GitHub site</a>', """
+GitHub site</a>, Deployed with <a href="https://github.com/AbdBarho/stable-diffusion-webui-docker/">stable-diffusion-webui-docker</a>
+""", 1)
+)
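`info.py` patches the web page by replacing the first occurrence of a marker string (`str.replace(old, new, 1)`). If a later upstream commit renames or removes that marker, the call does nothing and the build still succeeds. The sketch below shows a variant that reports whether the marker was found. The helper name `patch_once` is hypothetical and it is not the script shipped in this change set.

```python
from pathlib import Path


def patch_once(path: Path, marker: str, replacement: str) -> bool:
    """Replace the first occurrence of marker in the file at path.

    Returns False (and leaves the file untouched) when the marker is absent,
    so the caller can decide whether that should fail the build.
    """
    original = path.read_text()
    if marker not in original:
        return False
    path.write_text(original.replace(marker, replacement, 1))
    return True
```

A caller could turn a `False` result into a warning or a non-zero exit code, depending on how strictly the attribution footer should be enforced.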

26

services/lstein/mount.sh

Executable file

@@ -0,0 +1,26 @@
+#!/bin/bash
+
+set -eu
+
+ROOT=/stable-diffusion
+
+mkdir -p "${ROOT}/models/ldm/stable-diffusion-v1/"
+ln -sf /cache/models/model.ckpt "${ROOT}/models/ldm/stable-diffusion-v1/model.ckpt"
+
+if test -f /cache/models/GFPGANv1.3.pth; then
+  base="${ROOT}/src/gfpgan/experiments/pretrained_models/"
+  mkdir -p "${base}"
+  ln -sf /cache/models/GFPGANv1.3.pth "${base}/GFPGANv1.3.pth"
+  echo "Mounted GFPGANv1.3.pth"
+fi
+
+# facexlib
+FACEX_WEIGHTS=/opt/conda/lib/python3.8/site-packages/facexlib/weights
+
+rm -rf "${FACEX_WEIGHTS}"
+mkdir -p /cache/weights
+ln -sf -T /cache/weights "${FACEX_WEIGHTS}"
+
+if [ "${PRELOAD}" == "true" ]; then
+  python3 -u scripts/preload_models.py
+fi
