mirror of

https://github.com/AbdBarho/stable-diffusion-webui-docker.git

synced 2025-10-27 08:14:26 -04:00

Compare commits

54 Commits

| Author | SHA1 | Date | |
|---|---|---|---|
| | 3c544dd7f4 | | |
| | 42cc17da74 | | |
| | 31e4dec08f | | |
| | 0148e5e109 | | |
| | 111825ac25 | | |
| | c1e13867d9 | | |
| | 463f332d14 | | |
| | 3682303355 | | |
| | 402c691a49 | | |
| | b36113b7d8 | | |
| | b60c787474 | | |
| | 161fd52c16 | | |
| | 3b3c244c31 | | |
| | 5698c49653 | | |
| | 710280c7ab | | |
| | e1e03229fd | | |
| | 79868d88e8 | | |
| | 6f5eef42a7 | | |
| | 14c4b36aff | | |
| | 28f171e64d | | |
| | 9af4a23ec4 | | |
| | 24ecd676ab | | |
| | ef36c50cf9 | | |
| | 43a5e5e85f | | |
| | 5bbc21ea3d | | |
| | 09366ed955 | | |
| | d4874e7c3a | | |
| | 7638fb4e5e | | |
| | 15a61a99d6 | | |
| | 556a50f49b | | |
| | b899f4e516 | | |
| | a8c85b4699 | | |
| | a96285d10b | | |
| | 83b78fe504 | | |
| | 84f9cb84e7 | | |
| | 6a66ff6abb | | |
| | 59892da866 | | |
| | fceb83c2b0 | | |
| | 17b01a7627 | | |
| | b96d7c30d0 | | |
| | aae83bb8f2 | | |
| | 10763a8f61 | | |
| | 64e8f093d2 | | |
| | 3e0a137c23 | | |
| | a1c16942ff | | |
| | 6ae3473214 | | |
| | 5d731cb43c | | |
| | c1fa2f1457 | | |
| | d8cfdd3af5 | | |
| | 03d12cbcd9 | | |
| | 2e76b6c4e7 | | |
| | 5eae2076ce | | |
| | 725e1f39ba | | |
| | ab651fe0d7 | | |

5

.devscripts/chmod.sh

Executable file

@@ -0,0 +1,5 @@

+#!/bin/bash
+
+set -Eeuo pipefail
+
+find . -name "*.sh" -exec git update-index --chmod=+x {} \;
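The script above marks every `*.sh` file as executable in the git index. As a rough companion, the sketch below lists which scripts still lack that bit. It assumes the `<mode> <object> <stage><TAB><path>` line format printed by `git ls-files -s`; the function name `non_executable_scripts` is hypothetical and not part of the repository.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical helper (not in the repo): reads `git ls-files -s` style lines
# on stdin and prints every *.sh path whose recorded mode is not 100755.
non_executable_scripts() {
  local mode object stage path
  # Fields are separated by spaces, with a tab before the path;
  # `path` receives the remainder of the line.
  while IFS=$' \t' read -r mode object stage path; do
    if [[ "${path}" == *.sh && "${mode}" != "100755" ]]; then
      printf '%s\n' "${path}"
    fi
  done
}

# Typical use inside a checkout (not executed here):
#   git ls-files -s | non_executable_scripts
```

An empty result would suggest that `chmod.sh` has nothing left to change.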

28

.github/ISSUE_TEMPLATE/bug.md

vendored

@@ -7,12 +7,33 @@ assignees: ''

|

||||

|

||||

---

|

||||

|

||||

**Has this issue been opened before? Check the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Main), the [issues](https://github.com/AbdBarho/stable-diffusion-webui-docker/issues?q=is%3Aissue) and in [the issues in the WebUI repo](https://github.com/hlky/stable-diffusion-webui)**

|

||||

<!-- PLEASE FILL THIS OUT, IT WILL MAKE BOTH OF OUR LIVES EASIER -->

|

||||

|

||||

**Has this issue been opened before?**

|

||||

|

||||

- [ ] It is not in the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/FAQ), I checked.

|

||||

- [ ] It is not in the [issues](https://github.com/AbdBarho/stable-diffusion-webui-docker/issues?q=), I searched.

|

||||

|

||||

|

||||

**Describe the bug**

|

||||

|

||||

<!-- tried to run the app, my cat exploded -->

|

||||

|

||||

|

||||

**Which UI**

|

||||

|

||||

hlky or auto or auto-cpu or lstein?

|

||||

|

||||

|

||||

**Hardware / Software**

|

||||

- OS: [e.g. Windows 10 / Ubuntu ]

|

||||

- OS version: <!-- on windows, use the command `winver` to find out, on ubuntu `lsb_release -d` -->

|

||||

- WSL version (if applicable): <!-- get using `wsl -l -v` -->

|

||||

- Docker Version: <!-- get using `docker version` -->

|

||||

- Docker compose version: <!-- get using `docker compose version` -->

|

||||

- Repo version: <!-- tag, commit sha, or "from master" -->

|

||||

- RAM:

|

||||

- GPU/VRAM:

|

||||

|

||||

**Steps to Reproduce**

|

||||

1. Go to '...'

|

||||

@@ -20,10 +41,5 @@ assignees: ''

|

||||

3. Scroll down to '....'

|

||||

4. See error

|

||||

|

||||

**Hardware / Software:**

|

||||

- OS: [e.g. Windows / Ubuntu and version]

|

||||

- GPU: [Nvidia 1660 / No GPU]

|

||||

- Version [e.g. 22]

|

||||

|

||||

**Additional context**

|

||||

Any other context about the problem here. If applicable, add screenshots to help explain your problem.

|

||||

|

||||

5

.github/pull_request_template.md

vendored

Normal file

@@ -0,0 +1,5 @@

+### Update versions
+
+- auto: https://github.com/AUTOMATIC1111/stable-diffusion-webui/commit/
+- hlky: https://github.com/sd-webui/stable-diffusion-webui/commit/
+- lstein: https://github.com/invoke-ai/InvokeAI/commit/

29

.github/workflows/docker.yml

vendored

@@ -1,24 +1,19 @@

|

||||

name: Build Image

|

||||

name: Build Images

|

||||

|

||||

on: [push]

|

||||

|

||||

# TODO: how to cache intermediate images?

|

||||

jobs:

|

||||

build_hlky:

|

||||

build:

|

||||

strategy:

|

||||

matrix:

|

||||

profile:

|

||||

- auto

|

||||

- hlky

|

||||

- lstein

|

||||

- download

|

||||

runs-on: ubuntu-latest

|

||||

name: hlky

|

||||

name: ${{ matrix.profile }}

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

- run: docker compose build --progress plain

|

||||

build_AUTOMATIC1111:

|

||||

runs-on: ubuntu-latest

|

||||

name: AUTOMATIC1111

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

- run: cd AUTOMATIC1111 && docker compose build --progress plain

|

||||

build_lstein:

|

||||

runs-on: ubuntu-latest

|

||||

name: lstein

|

||||

steps:

|

||||

- uses: actions/checkout@v3

|

||||

- run: cd lstein && docker compose build --progress plain

|

||||

# better caching?

|

||||

- run: docker compose --profile ${{ matrix.profile }} build --progress plain

|

||||

|

||||

22

.github/workflows/executable.yml1

vendored

@@ -1,22 +0,0 @@

-name: Check executable
-
-on: [push]
-
-jobs:
-  check:
-    runs-on: ubuntu-latest
-    name: Check all sh
-    steps:
-      - run: git config --global core.fileMode true
-      - uses: actions/checkout@v3
-      - shell: bash
-        run: |
-          shopt -s globstar;
-          FAIL=0
-          for file in **/*.sh; do
-            if [ -f "${file}" ] && [ -r "${file}" ] && [ ! -x "${file}" ]; then
-              echo "$file" is not executable;
-              FAIL=1
-            fi
-          done
-          exit ${FAIL}
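For anyone who wants to run the removed check locally, here is a minimal sketch of the same idea. It swaps the workflow's `globstar` loop for `find` with `-perm`, so it tests the owner-execute mode bit rather than using `[ -x ]`. The function name `check_executable` and its directory argument are assumptions made for illustration.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical local variant of the removed workflow step: prints each *.sh
# file under the given directory that lacks the owner-execute bit and
# returns 1 if at least one such file was found.
check_executable() {
  local dir="$1" fail=0 file
  while IFS= read -r -d '' file; do
    echo "${file} is not executable"
    fail=1
  done < <(find "${dir}" -type f -name '*.sh' ! -perm -u+x -print0)
  return "${fail}"
}

# Example (not executed here):
#   check_executable . || echo "fix with .devscripts/chmod.sh"
```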

20

.github/workflows/stale.yml

vendored

Normal file

@@ -0,0 +1,20 @@

+name: 'Close stale issues and PRs'
+on:
+  schedule:
+    - cron: '30 1 * * *'
+
+jobs:
+  stale:
+    runs-on: ubuntu-latest
+    steps:
+      - uses: actions/stale@v5
+        with:
+          only-labels: awaiting-response
+          stale-issue-message: This issue is stale because it has been open 14 days with no activity. Remove stale label or comment or this will be closed in 7 days.
+          stale-pr-message: This PR is stale because it has been open 14 days with no activity. Remove stale label or comment or this will be closed in 7 days.
+          close-issue-message: This issue was closed because it has been stalled for 7 days with no activity.
+          close-pr-message: This PR was closed because it has been stalled for 7 days with no activity.
+          days-before-issue-stale: 14
+          days-before-pr-stale: 14
+          days-before-issue-close: 7
+          days-before-pr-close: 7

36

.github/workflows/xformers.yml

vendored

Normal file

@@ -0,0 +1,36 @@

|

||||

name: Build Xformers

|

||||

|

||||

on:

|

||||

workflow_dispatch: {}

|

||||

|

||||

jobs:

|

||||

build:

|

||||

runs-on: ubuntu-latest

|

||||

timeout-minutes: 180

|

||||

container:

|

||||

image: python:3.10-slim

|

||||

env:

|

||||

DEBIAN_FRONTEND: noninteractive

|

||||

XFORMERS_DISABLE_FLASH_ATTN: 1

|

||||

FORCE_CUDA: 1

|

||||

TORCH_CUDA_ARCH_LIST: "6.0;6.1;6.2;7.0;7.2;7.5;8.0;8.6"

|

||||

NVCC_FLAGS: --use_fast_math -DXFORMERS_MEM_EFF_ATTENTION_DISABLE_BACKWARD

|

||||

MAX_JOBS: 4

|

||||

steps:

|

||||

- run: |

|

||||

apt-get update

|

||||

apt-get install gpg wget git -y

|

||||

wget https://developer.download.nvidia.com/compute/cuda/repos/debian11/x86_64/cuda-keyring_1.0-1_all.deb

|

||||

dpkg -i cuda-keyring_1.0-1_all.deb

|

||||

apt-get update

|

||||

apt-get install cuda-nvcc-11-8 cuda-libraries-dev-11-8 -y

|

||||

|

||||

export PIP_CACHE_DIR=$(pwd)/cache

|

||||

pip install ninja install torch --extra-index-url https://download.pytorch.org/whl/cu113

|

||||

|

||||

pip wheel --wheel-dir=data git+https://github.com/facebookresearch/xformers.git@3633e1afc7bffbe61957f04e7bb1a742ee910ace#egg=xformers

|

||||

- name: Artifacts

|

||||

uses: actions/upload-artifact@v3

|

||||

with:

|

||||

name: xformers

|

||||

path: data/xformers-0.0.14.dev0-cp310-cp310-linux_x86_64.whl

|

||||

2

.gitignore

vendored

@@ -1,2 +1,2 @@

|

||||

/dev

|

||||

/.devcontainer

|

||||

/docker-compose.override.yml

|

||||

|

||||

@@ -1,61 +0,0 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM alpine/git:2.36.2 as download

|

||||

RUN <<EOF

|

||||

# who knows

|

||||

git config --global http.postBuffer 1048576000

|

||||

git clone https://github.com/sczhou/CodeFormer.git repositories/CodeFormer

|

||||

git clone https://github.com/CompVis/stable-diffusion.git repositories/stable-diffusion

|

||||

git clone https://github.com/CompVis/taming-transformers.git repositories/taming-transformers

|

||||

rm -rf repositories/taming-transformers/data repositories/taming-transformers/assets

|

||||

EOF

|

||||

|

||||

|

||||

FROM continuumio/miniconda3:4.12.0

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

ENV DEBIAN_FRONTEND=noninteractive

|

||||

|

||||

RUN conda install python=3.8.5 && conda clean -a -y

|

||||

RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y

|

||||

|

||||

RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean

|

||||

|

||||

|

||||

RUN <<EOF

|

||||

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git

|

||||

cd stable-diffusion-webui

|

||||

git reset --hard 13eec4f3d4081fdc43883c5ef02e471a2b6c7212

|

||||

conda env update --file environment-wsl2.yaml -n base

|

||||

conda clean -a -y

|

||||

pip install --prefer-binary --no-cache-dir -r requirements.txt

|

||||

EOF

|

||||

|

||||

ENV ROOT=/stable-diffusion-webui \

|

||||

WORKDIR=/stable-diffusion-webui/repositories/stable-diffusion

|

||||

|

||||

COPY --from=download /git/ ${ROOT}

|

||||

RUN pip install --prefer-binary --no-cache-dir -r ${ROOT}/repositories/CodeFormer/requirements.txt

|

||||

|

||||

# Note: don't update the sha of previous versions because the install will take forever

|

||||

# instead, update the repo state in a later step

|

||||

ARG SHA=06fadd2dc5c2753558a9f3971568c2673819f48c

|

||||

RUN <<EOF

|

||||

cd stable-diffusion-webui

|

||||

git pull

|

||||

git reset --hard ${SHA}

|

||||

pip install --prefer-binary --no-cache-dir -r requirements.txt

|

||||

EOF

|

||||

|

||||

RUN pip install --prefer-binary -U --no-cache-dir opencv-python-headless markupsafe==2.0.1

|

||||

|

||||

ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""

|

||||

|

||||

COPY . /docker

|

||||

RUN chmod +x /docker/mount.sh && python3 /docker/info.py ${ROOT}/modules/ui.py

|

||||

|

||||

WORKDIR ${WORKDIR}

|

||||

EXPOSE 7860

|

||||

# run, -u to not buffer stdout / stderr

|

||||

CMD /docker/mount.sh && python3 -u ../../webui.py --listen --port 7860 ${CLI_ARGS}

|

||||
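The `CMD` above passes `${CLI_ARGS}` unquoted, which is what lets a single environment value such as `--medvram --opt-split-attention` reach `webui.py` as separate arguments. The sketch below illustrates that shell word-splitting behavior in isolation; `count_args` is a hypothetical helper used only for the demonstration.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical helper: prints how many arguments it received.
count_args() {
  echo "$#"
}

CLI_ARGS="--medvram --opt-split-attention"

# Unquoted expansion is split on whitespace, as in the Dockerfile CMD.
count_args ${CLI_ARGS}     # prints 2

# Quoted expansion would hand the program one argument containing a space.
count_args "${CLI_ARGS}"   # prints 1
```

The flip side is that an argument value containing spaces cannot be passed intact through `CLI_ARGS` with this approach.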

@@ -1,14 +0,0 @@

|

||||

# WebUI for AUTOMATIC1111

|

||||

|

||||

The WebUI of [AUTOMATIC1111/stable-diffusion-webui](https://github.com/AUTOMATIC1111/stable-diffusion-webui) as docker container!

|

||||

|

||||

## Setup

|

||||

|

||||

Clone this repo, download the `model.ckpt` and `GFPGANv1.3.pth` and put into the `models` folder as mentioned in [the main README](../README.md), then run

|

||||

|

||||

```

|

||||

cd AUTOMATIC1111

|

||||

docker compose up --build

|

||||

```

|

||||

|

||||

You can change the CLI parameters in `AUTOMATIC1111/docker-compose.yml`. The full list of CLI parameters can be found [here](https://github.com/AUTOMATIC1111/stable-diffusion-webui/blob/master/modules/shared.py)

|

||||

@@ -1 +0,0 @@

|

||||

{"outdir_samples": "/output", "outdir_txt2img_samples": "/output/txt2img-images", "outdir_img2img_samples": "/output/img2img-images", "outdir_extras_samples": "/output/extras-images", "outdir_txt2img_grids": "/output/txt2img-grids", "outdir_img2img_grids": "/output/img2img-grids", "outdir_save": "/output/saved", "__WARNING__": "DON'T CHANGE ANYTHING BEFORE THIS", "outdir_grids": "", "save_to_dirs": false, "save_to_dirs_prompt_len": 10, "samples_save": true, "samples_format": "png", "grid_save": true, "return_grid": true, "grid_format": "png", "grid_extended_filename": false, "grid_only_if_multiple": true, "n_rows": -1, "jpeg_quality": 80, "export_for_4chan": true, "enable_pnginfo": true, "font": "DejaVuSans.ttf", "enable_emphasis": true, "save_txt": false, "ESRGAN_tile": 192, "ESRGAN_tile_overlap": 8, "random_artist_categories": [], "upscale_at_full_resolution_padding": 16, "show_progressbar": true, "show_progress_every_n_steps": 0, "multiple_tqdm": true, "face_restoration_model": "CodeFormer", "code_former_weight": 0.5}

|

||||

@@ -1,21 +0,0 @@

|

||||

version: '3.9'

|

||||

|

||||

services:

|

||||

model:

|

||||

build: .

|

||||

ports:

|

||||

- "7860:7860"

|

||||

volumes:

|

||||

- ../cache:/cache

|

||||

- ../output:/output

|

||||

- ../models:/models

|

||||

- ./config.json:/docker/config.json

|

||||

environment:

|

||||

- CLI_ARGS=--medvram --opt-split-attention

|

||||

deploy:

|

||||

resources:

|

||||

reservations:

|

||||

devices:

|

||||

- driver: nvidia

|

||||

device_ids: ['0']

|

||||

capabilities: [gpu]

|

||||

@@ -1,34 +0,0 @@

-#!/bin/bash
-
-set -e
-
-declare -A MODELS
-
-MODELS["${WORKDIR}/models/ldm/stable-diffusion-v1/model.ckpt"]=model.ckpt
-MODELS["${ROOT}/GFPGANv1.3.pth"]=GFPGANv1.3.pth
-
-for path in "${!MODELS[@]}"; do
-  name=${MODELS[$path]}
-  base=$(dirname "${path}")
-  from_path="/models/${name}"
-  if test -f "${from_path}"; then
-    mkdir -p "${base}" && ln -sf "${from_path}" "${path}" && echo "Mounted ${name}"
-  else
-    echo "Skipping ${name}"
-  fi
-done
-
-# force realesrgan cache
-rm -rf /opt/conda/lib/python3.8/site-packages/realesrgan/weights
-ln -s -T /models /opt/conda/lib/python3.8/site-packages/realesrgan/weights
-
-# force facexlib cache
-mkdir -p /cache/weights/ ${WORKDIR}/gfpgan/
-ln -sf /cache/weights/ ${WORKDIR}/gfpgan/
-# code former cache
-rm -rf ${ROOT}/repositories/CodeFormer/weights/CodeFormer ${ROOT}/repositories/CodeFormer/weights/facelib
-ln -sf -T /cache/weights ${ROOT}/repositories/CodeFormer/weights/CodeFormer
-ln -sf -T /cache/weights ${ROOT}/repositories/CodeFormer/weights/facelib
-
-# mount config
-ln -sf /docker/config.json ${WORKDIR}/config.json
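The removed `mount.sh` maps expected in-container paths to file names in `/models` through an associative array, then symlinks whichever files are present. The following is a minimal sketch of that pattern that can be tried outside a container. The function name `link_models` and its `target=name` argument format are assumptions for illustration, not the script's interface.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical re-packaging of the mount loop.
# Usage: link_models <source_dir> <target_path>=<file_name>...
link_models() {
  local source_dir="$1"
  shift
  local -A models=()
  local pair path name
  for pair in "$@"; do
    # Text before the first "=" is the link location, the rest is the file name.
    models["${pair%%=*}"]="${pair#*=}"
  done
  for path in "${!models[@]}"; do
    name="${models[$path]}"
    if [[ -f "${source_dir}/${name}" ]]; then
      mkdir -p "$(dirname "${path}")"
      ln -sf "${source_dir}/${name}" "${path}"
      echo "Mounted ${name}"
    else
      echo "Skipping ${name}"
    fi
  done
}
```

Bash does not guarantee the iteration order of associative-array keys, so the "Mounted"/"Skipping" lines may appear in any order.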

15

LICENSE

@@ -86,4 +86,17 @@ administration of justice, law enforcement, immigration or asylum

|

||||

processes, such as predicting an individual will commit fraud/crime

|

||||

commitment (e.g. by text profiling, drawing causal relationships between

|

||||

assertions made in documents, indiscriminate and arbitrarily-targeted

|

||||

use).

|

||||

use).

|

||||

|

||||

|

||||

|

||||

By using this software, you also agree to the following licenses:

|

||||

https://github.com/CompVis/stable-diffusion/blob/main/LICENSE

|

||||

https://github.com/sd-webui/stable-diffusion-webui/blob/master/LICENSE

|

||||

https://github.com/invoke-ai/InvokeAI/blob/main/LICENSE

|

||||

https://github.com/cszn/BSRGAN/blob/main/LICENSE

|

||||

https://github.com/sczhou/CodeFormer/blob/master/LICENSE

|

||||

https://github.com/TencentARC/GFPGAN/blob/master/LICENSE

|

||||

https://github.com/xinntao/Real-ESRGAN/blob/master/LICENSE

|

||||

https://github.com/xinntao/ESRGAN/blob/master/LICENSE

|

||||

https://github.com/cszn/SCUNet/blob/main/LICENSE

|

||||

|

||||

95

README.md

@@ -2,84 +2,74 @@

|

||||

|

||||

Run Stable Diffusion on your machine with a nice UI without any hassle!

|

||||

|

||||

This repository provides the [WebUI](https://github.com/hlky/stable-diffusion-webui) as a docker image for easy setup and deployment.

|

||||

|

||||

Now with experimental support for 2 other forks:

|

||||

|

||||

- [AUTOMATIC1111](./AUTOMATIC1111/) (Stable, very few bugs!)

|

||||

- [lstein](./lstein/)

|

||||

|

||||

NOTE: big update coming up!

|

||||

This repository provides multiple UIs for you to play around with stable diffusion:

|

||||

|

||||

## Features

|

||||

|

||||

- Interactive UI with many features, and more on the way!

|

||||

- Support for 6GB GPU cards.

|

||||

- GFPGAN for face reconstruction, RealESRGAN for super-sampling.

|

||||

- Experimental:

|

||||

- Latent Diffusion Super Resolution

|

||||

- GoBig

|

||||

- GoLatent

|

||||

- many more!

|

||||

### AUTOMATIC1111

|

||||

|

||||

## Setup

|

||||

[AUTOMATIC1111's fork](https://github.com/AUTOMATIC1111/stable-diffusion-webui) is imho the most feature rich yet elegant UI:

|

||||

|

||||

Make sure you have an **up to date** version of docker installed. Download this repo and run:

|

||||

- Text to image, with many samplers and even negative prompts!

|

||||

- Image to image, with masking, cropping, in-painting, out-painting, variations.

|

||||

- GFPGAN, RealESRGAN, LDSR, CodeFormer.

|

||||

- Loopback, prompt weighting, prompt matrix, X/Y plot

|

||||

- Live preview of the generated images.

|

||||

- Highly optimized 4GB GPU support, or even CPU only!

|

||||

- Textual inversion allows you to use pretrained textual inversion embeddings

|

||||

- [Full feature list here](https://github.com/AUTOMATIC1111/stable-diffusion-webui-feature-showcase)

|

||||

|

||||

```

|

||||

docker compose build

|

||||

```

|

||||

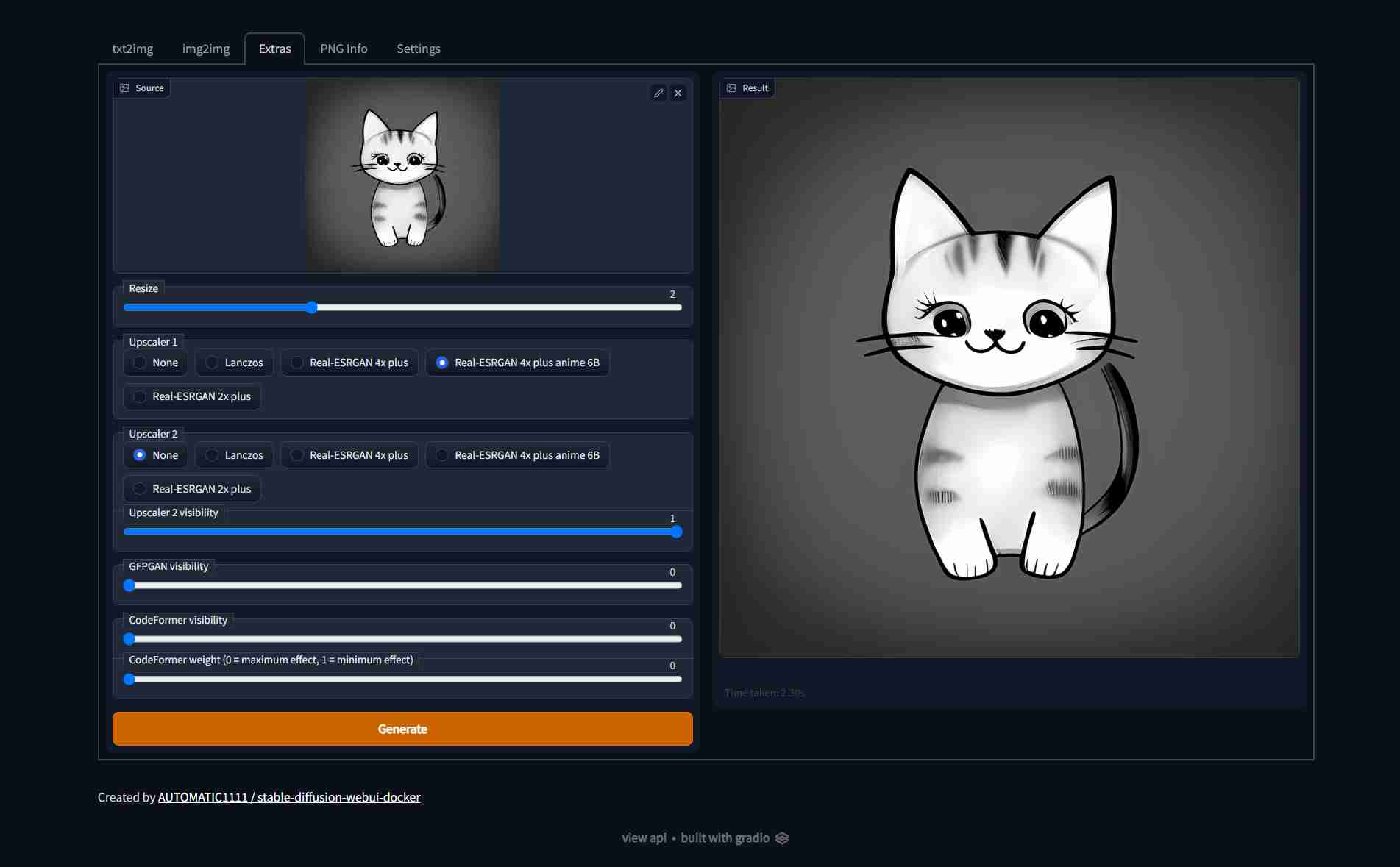

| Text to image | Image to image | Extras |

|

||||

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||

|  |  |  |

|

||||

|

||||

you can let it build in the background while you download the different models

|

||||

### hlky (sd-webui)

|

||||

|

||||

- [Stable Diffusion v1.4 (4GB)](https://www.googleapis.com/storage/v1/b/aai-blog-files/o/sd-v1-4.ckpt?alt=media), rename to `model.ckpt`

|

||||

- (Optional) [GFPGANv1.3.pth (333MB)](https://github.com/TencentARC/GFPGAN/releases/download/v1.3.0/GFPGANv1.3.pth).

|

||||

- (Optional) [RealESRGAN_x4plus.pth (64MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth) and [RealESRGAN_x4plus_anime_6B.pth (18MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth).

|

||||

- (Optional) [LDSR (2GB)](https://heibox.uni-heidelberg.de/f/578df07c8fc04ffbadf3/?dl=1) and [its configuration](https://heibox.uni-heidelberg.de/f/31a76b13ea27482981b4/?dl=1), rename to `LDSR.ckpt` and `LDSR.yaml` respectively.

|

||||

<!-- - (Optional) [RealESRGAN_x2plus.pth (64MB)](https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.1/RealESRGAN_x2plus.pth)

|

||||

- TODO: (I still need to find the RealESRGAN_x2plus_6b.pth) -->

|

||||

[hlky's fork](https://github.com/sd-webui/stable-diffusion-webui) is one of the most popular UIs, with many features:

|

||||

|

||||

Put all of the downloaded files in the `models` folder, it should look something like this:

|

||||

- Text to image, with many samplers

|

||||

- Image to image, with masking, cropping, in-painting, variations.

|

||||

- GFPGAN, RealESRGAN, LDSR, GoBig, GoLatent

|

||||

- Loopback, prompt weighting

|

||||

- 6GB or even 4GB GPU support!

|

||||

- [Full feature list here](https://github.com/sd-webui/stable-diffusion-webui/blob/master/README.md)

|

||||

|

||||

```

|

||||

models/

|

||||

├── model.ckpt

|

||||

├── GFPGANv1.3.pth

|

||||

├── RealESRGAN_x4plus.pth

|

||||

├── RealESRGAN_x4plus_anime_6B.pth

|

||||

├── LDSR.ckpt

|

||||

└── LDSR.yaml

|

||||

```

|

||||

Screenshots:

|

||||

|

||||

## Run

|

||||

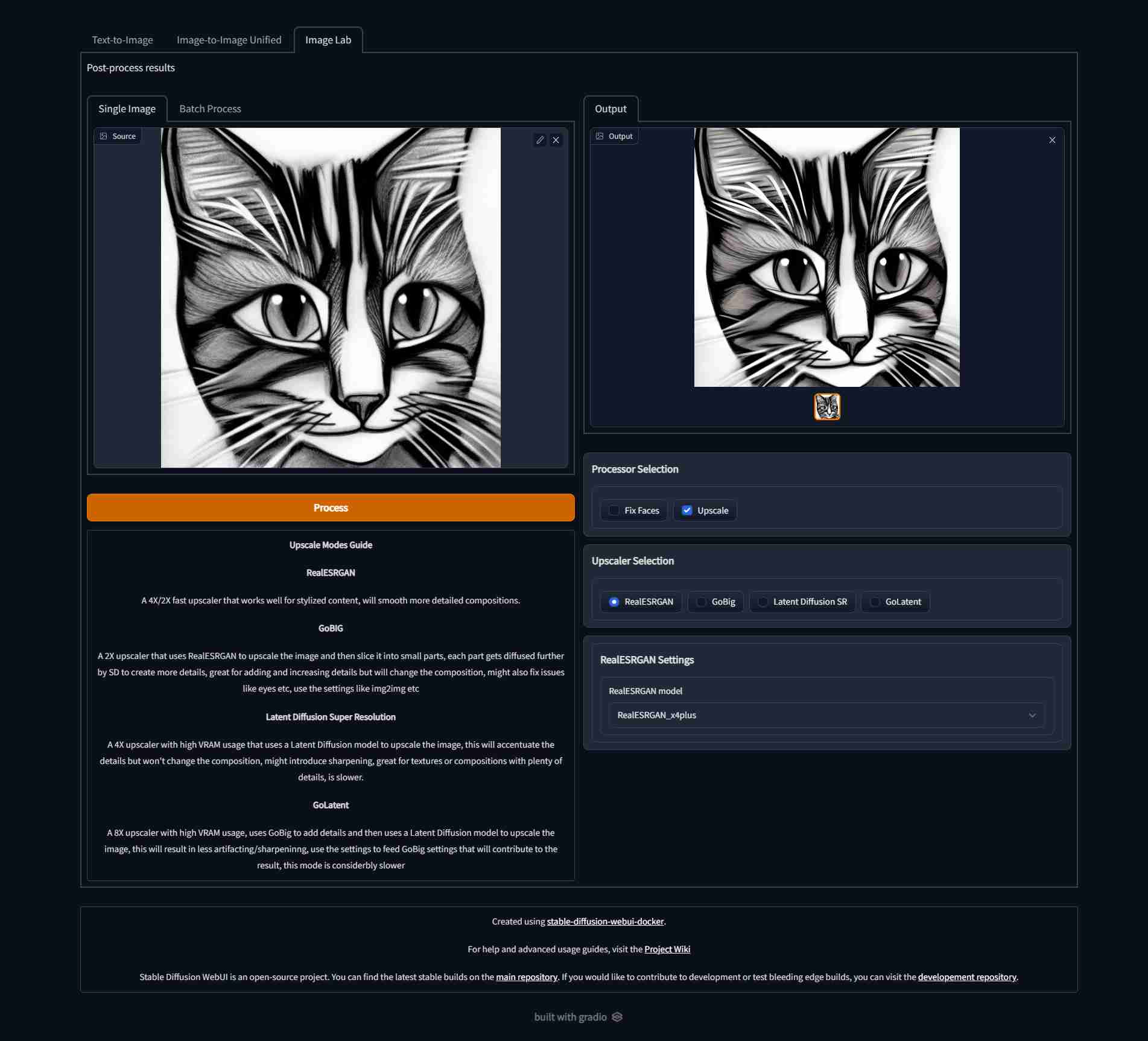

| Text to image | Image to image | Image Lab |

|

||||

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||

|  |  |  |

|

||||

|

||||

After the build is done, you can run the app with:

|

||||

|

||||

```

|

||||

docker compose up --build

|

||||

```

|

||||

|

||||

Will start the app on http://localhost:7860/

|

||||

### lstein (InvokeAI)

|

||||

|

||||

Note: the first start will take some time because additional models are downloaded; these are cached in the `cache` folder, so subsequent runs are faster.

|

||||

[lstein's fork](https://github.com/invoke-ai/InvokeAI) is one of the earliest with a wonderful WebUI.

|

||||

- Text to image, with many samplers

|

||||

- Image to image

|

||||

- 4GB GPU support

|

||||

- More coming!

|

||||

- [Full feature list here](https://github.com/invoke-ai/InvokeAI#features)

|

||||

|

||||

### FAQ

|

||||

| Text to image | Image to image | Extras |

|

||||

| ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- | ---------------------------------------------------------------------------------------------------------- |

|

||||

|  |  |  |

|

||||

|

||||

You can find fixes to common issues [in the wiki page.](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/FAQ)

|

||||

## Setup & Usage

|

||||

|

||||

## Config

|

||||

Visit the wiki for [Setup](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Setup) and [Usage](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/Usage) instructions, checkout the [FAQ](https://github.com/AbdBarho/stable-diffusion-webui-docker/wiki/FAQ) page if you face any problems, or create a new issue!

|

||||

|

||||

in the `docker-compose.yml` you can change the `CLI_ARGS` variable, which contains the arguments that will be passed to the WebUI. By default: `--extra-models-cpu --optimized-turbo` are given, which allow you to use this model on a 6GB GPU. However, some features might not be available in the mode. [You can find the full list of arguments here.](https://github.com/hlky/stable-diffusion-webui/blob/2b1ac8daf7ea82c6c56eabab7e80ec1c33106a98/scripts/webui.py)

|

||||

## Contributing

|

||||

|

||||

You can set the `WEBUI_SHA` to [any SHA from the main repo](https://github.com/hlky/stable-diffusion/commits/main), this will build the container against that commit. Use at your own risk.

|

||||

Contributions are welcome! Create an issue first describing what you want to contribute (before you implement anything) so we can talk about it.

|

||||

|

||||

# Disclaimer

|

||||

## Disclaimer

|

||||

|

||||

The authors of this project are not responsible for any content generated using this interface.

|

||||

|

||||

This license of this software forbids you from sharing any content that violates any laws, produce any harm to a person, disseminate any personal information that would be meant for harm, spread misinformation and target vulnerable groups. For the full list of restrictions please read [the license](./LICENSE).

|

||||

|

||||

# Thanks

|

||||

## Thanks

|

||||

|

||||

Special thanks to everyone behind these awesome projects, without them, none of this would have been possible:

|

||||

|

||||

@@ -89,3 +79,4 @@ Special thanks to everyone behind these awesome projects, without them, none of

|

||||

- [CompVis/stable-diffusion](https://github.com/CompVis/stable-diffusion)

|

||||

- [hlky/sd-enable-textual-inversion](https://github.com/hlky/sd-enable-textual-inversion)

|

||||

- [devilismyfriend/latent-diffusion](https://github.com/devilismyfriend/latent-diffusion)

|

||||

- [Hafiidz/latent-diffusion](https://github.com/Hafiidz/latent-diffusion)

|

||||

|

||||

3

cache/.gitignore

vendored

@@ -1,3 +0,0 @@

-/torch
-/transformers
-/weights

17

data/.gitignore

vendored

Normal file

@@ -0,0 +1,17 @@

+# for all of the stuff downloaded by transformers, pytorch, and others
+/.cache
+# for UIs
+/config
+# for all stable diffusion models (main, waifu diffusion, etc..)
+/StableDiffusion
+# others
+/Codeformer
+/GFPGAN
+/ESRGAN
+/BSRGAN
+/RealESRGAN
+/SwinIR
+/ScuNET
+/LDSR
+/Hypernetworks
+/embeddings

@@ -1,22 +1,11 @@

|

||||

version: '3.9'

|

||||

|

||||

services:

|

||||

model:

|

||||

build:

|

||||

context: ./hlky/

|

||||

args:

|

||||

# You can choose any commit sha from https://github.com/hlky/stable-diffusion/commits/main

|

||||

# USE AT YOUR OWN RISK! otherwise just leave it empty.

|

||||

BRANCH:

|

||||

WEBUI_SHA:

|

||||

x-base_service: &base_service

|

||||

ports:

|

||||

- "7860:7860"

|

||||

volumes:

|

||||

- ./cache:/cache

|

||||

- ./output:/output

|

||||

- ./models:/models

|

||||

environment:

|

||||

- CLI_ARGS=--extra-models-cpu --optimized-turbo

|

||||

- &v1 ./data:/data

|

||||

- &v2 ./output:/output

|

||||

deploy:

|

||||

resources:

|

||||

reservations:

|

||||

@@ -24,3 +13,45 @@ services:

|

||||

- driver: nvidia

|

||||

device_ids: ['0']

|

||||

capabilities: [gpu]

|

||||

|

||||

name: webui-docker

|

||||

|

||||

services:

|

||||

download:

|

||||

build: ./services/download/

|

||||

profiles: ["download"]

|

||||

volumes:

|

||||

- *v1

|

||||

|

||||

hlky:

|

||||

<<: *base_service

|

||||

profiles: ["hlky"]

|

||||

build: ./services/hlky/

|

||||

image: sd-hlky:1

|

||||

environment:

|

||||

- CLI_ARGS=--optimized-turbo

|

||||

- USE_STREAMLIT=0

|

||||

|

||||

auto: &automatic

|

||||

<<: *base_service

|

||||

profiles: ["auto"]

|

||||

build: ./services/AUTOMATIC1111

|

||||

image: sd-auto:1

|

||||

environment:

|

||||

- CLI_ARGS=--allow-code --medvram --xformers

|

||||

|

||||

auto-cpu:

|

||||

<<: *automatic

|

||||

profiles: ["auto-cpu"]

|

||||

deploy: {}

|

||||

environment:

|

||||

- CLI_ARGS=--no-half --precision full

|

||||

|

||||

lstein:

|

||||

<<: *base_service

|

||||

profiles: ["lstein"]

|

||||

build: ./services/lstein/

|

||||

image: sd-lstein:1

|

||||

environment:

|

||||

- PRELOAD=true

|

||||

- CLI_ARGS=

|

||||

|

||||
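With the services now grouped under compose profiles, a UI is selected with `--profile`, as the updated build workflow does with `docker compose --profile ${{ matrix.profile }} build --progress plain`. The sketch below only prints the command for a given profile so it can be checked before running. The helper name `compose_command` and the default action `up --build` are assumptions.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical dry-run helper: validates the profile name against the
# profiles declared in the compose file above and prints the command.
compose_command() {
  local profile="$1" action="${2:-up --build}"
  case "${profile}" in
    download | hlky | auto | auto-cpu | lstein) ;;
    *)
      echo "unknown profile: ${profile}" >&2
      return 1
      ;;
  esac
  echo "docker compose --profile ${profile} ${action}"
}

compose_command auto                      # docker compose --profile auto up --build
compose_command hlky "build --progress plain"
```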

@@ -1,65 +0,0 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM continuumio/miniconda3:4.12.0

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

RUN conda install python=3.8.5 && conda clean -a -y

|

||||

RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y

|

||||

|

||||

RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean

|

||||

|

||||

|

||||

RUN <<EOF

|

||||

git clone https://github.com/sd-webui/stable-diffusion-webui.git stable-diffusion

|

||||

cd stable-diffusion

|

||||

git reset --hard 2b1ac8daf7ea82c6c56eabab7e80ec1c33106a98

|

||||

conda env update --file environment.yaml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

# new dependency, should be added to the environment.yaml

|

||||

RUN pip install -U --no-cache-dir pyperclip

|

||||

|

||||

# Note: don't update the sha of previous versions because the install will take forever

|

||||

# instead, update the repo state in a later step

|

||||

ARG BRANCH=dev

|

||||

ARG WEBUI_SHA=be2ece06837e37d90181a17340c7e1aac91ba4fb

|

||||

RUN <<EOF

|

||||

cd stable-diffusion

|

||||

git fetch

|

||||

git checkout ${BRANCH}

|

||||

git reset --hard ${WEBUI_SHA}

|

||||

conda env update --file environment.yaml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

# Textual inversion

|

||||

RUN <<EOF

|

||||

git clone https://github.com/hlky/sd-enable-textual-inversion.git &&

|

||||

cd /sd-enable-textual-inversion && git reset --hard 08f9b5046552d17cf7327b30a98410222741b070 &&

|

||||

rsync -a /sd-enable-textual-inversion/ /stable-diffusion/ &&

|

||||

rm -rf /sd-enable-textual-inversion

|

||||

EOF

|

||||

|

||||

# Latent diffusion

|

||||

RUN <<EOF

|

||||

git clone https://github.com/devilismyfriend/latent-diffusion &&

|

||||

cd /latent-diffusion &&

|

||||

git reset --hard 6d61fc03f15273a457950f2cdc10dddf53ba6809 &&

|

||||

# hacks all the way down

|

||||

mv ldm ldm_latent &&

|

||||

sed -i -- 's/from ldm/from ldm_latent/g' *.py

|

||||

# dont forget to update the yaml!!

|

||||

EOF

|

||||

|

||||

|

||||

# add info

|

||||

COPY . /docker/

|

||||

RUN python /docker/info.py /stable-diffusion/frontend/frontend.py && chmod +x /docker/mount.sh

|

||||

|

||||

WORKDIR /stable-diffusion

|

||||

ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""

|

||||

EXPOSE 7860

|

||||

# run, -u to not buffer stdout / stderr

|

||||

CMD /docker/mount.sh && python3 -u scripts/webui.py --outdir /output --ckpt /models/model.ckpt --ldsr-dir /latent-diffusion ${CLI_ARGS}

|

||||

@@ -1,30 +0,0 @@

-#!/bin/bash
-
-set -e
-
-declare -A MODELS
-MODELS["/stable-diffusion/src/gfpgan/experiments/pretrained_models/GFPGANv1.3.pth"]=GFPGANv1.3.pth
-MODELS["/stable-diffusion/src/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus.pth"]=RealESRGAN_x4plus.pth
-MODELS["/stable-diffusion/src/realesrgan/experiments/pretrained_models/RealESRGAN_x4plus_anime_6B.pth"]=RealESRGAN_x4plus_anime_6B.pth
-MODELS["/latent-diffusion/experiments/pretrained_models/model.ckpt"]=LDSR.ckpt
-# MODELS["/latent-diffusion/experiments/pretrained_models/project.yaml"]=LDSR.yaml
-
-for path in "${!MODELS[@]}"; do
-  name=${MODELS[$path]}
-  base=$(dirname "${path}")
-  from_path="/models/${name}"
-  if test -f "${from_path}"; then
-    mkdir -p "${base}" && ln -sf "${from_path}" "${path}" && echo "Mounted ${name}"
-  else
-    echo "Skipping ${name}"
-  fi
-done
-
-# hack for latent-diffusion
-if test -f /models/LDSR.yaml; then
-  sed 's/ldm\./ldm_latent\./g' /models/LDSR.yaml >/latent-diffusion/experiments/pretrained_models/project.yaml
-fi
-
-# force facexlib cache
-mkdir -p /cache/weights/ /stable-diffusion/gfpgan/
-ln -sf /cache/weights/ /stable-diffusion/gfpgan/
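The "hack for latent-diffusion" in the removed script rewrites module references in `LDSR.yaml` so they point at the renamed `ldm_latent` package. Here is a small sketch of that substitution on a single line. The wrapper name `rewrite_ldm_namespace` and the sample YAML line are made up for illustration; the `sed` expression is the one from the script.

```shell
#!/bin/bash
set -Eeuo pipefail

# Hypothetical wrapper around the script's sed expression: replaces every
# "ldm." on stdin with "ldm_latent.".
rewrite_ldm_namespace() {
  sed 's/ldm\./ldm_latent\./g'
}

# Illustrative input line, not taken from the real LDSR.yaml.
echo 'target: ldm.models.diffusion.ddpm.LatentDiffusion' | rewrite_ldm_namespace
# prints: target: ldm_latent.models.diffusion.ddpm.LatentDiffusion
```

The pattern is not anchored, so any other token that ends in `ldm.` would be rewritten as well.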

@@ -1,29 +0,0 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM continuumio/miniconda3:4.12.0

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

RUN conda install python=3.8.5 && conda clean -a -y

|

||||

RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y

|

||||

|

||||

RUN apt-get update && apt install fonts-dejavu-core rsync -y && apt-get clean

|

||||

|

||||

|

||||

RUN <<EOF

|

||||

git clone https://github.com/lstein/stable-diffusion.git

|

||||

cd stable-diffusion

|

||||

git reset --hard 751283a2de81bee4bb571fbabe4adb19f1d85b97

|

||||

conda env update --file environment.yaml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

ENV TRANSFORMERS_CACHE=/cache/transformers TORCH_HOME=/cache/torch CLI_ARGS=""

|

||||

|

||||

WORKDIR /stable-diffusion

|

||||

|

||||

EXPOSE 7860

|

||||

# run, -u to not buffer stdout / stderr

|

||||

CMD mkdir -p /stable-diffusion/models/ldm/stable-diffusion-v1/ && \

|

||||

ln -sf /models/model.ckpt /stable-diffusion/models/ldm/stable-diffusion-v1/model.ckpt && \

|

||||

python3 -u scripts/dream.py --outdir /output --web --host 0.0.0.0 --port 7860 ${CLI_ARGS}

|

||||

@@ -1,14 +0,0 @@

|

||||

# WebUI for lstein

|

||||

|

||||

The WebUI of [lstein/stable-diffusion](https://github.com/lstein/stable-diffusion) as a Docker container!

|

||||

|

||||

Although it is a simple UI, the project has a lot of potential.

|

||||

|

||||

## Setup

|

||||

|

||||

Clone this repo, download the `model.ckpt`, and put it into the `models` folder as mentioned in [the main README](../README.md), then run:

|

||||

|

||||

```

|

||||

cd lstein

|

||||

docker compose up --build

|

||||

```

|

||||

@@ -1,20 +0,0 @@

|

||||

version: '3.9'

services:
  model:
    build: .
    ports:
      - "7860:7860"
    volumes:
      - ../cache:/cache
      - ../output:/output
      - ../models:/models
    environment:
      - CLI_ARGS=
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              device_ids: ['0']
              capabilities: [gpu]

|

||||

7

models/.gitignore

vendored

@@ -1,7 +0,0 @@

|

||||

/model.ckpt

|

||||

/GFPGANv1.3.pth

|

||||

/RealESRGAN_x2plus.pth

|

||||

/RealESRGAN_x4plus.pth

|

||||

/RealESRGAN_x4plus_anime_6B.pth

|

||||

/LDSR.ckpt

|

||||

/LDSR.yaml

|

||||

30

scripts/migratev1tov2.sh

Executable file

@@ -0,0 +1,30 @@

|

||||

mkdir -p data/.cache data/StableDiffusion data/Codeformer data/GFPGAN data/ESRGAN data/BSRGAN data/RealESRGAN data/SwinIR data/LDSR data/embeddings

|

||||

|

||||

cp -vf cache/models/model.ckpt data/StableDiffusion/model.ckpt

|

||||

|

||||

cp -vf cache/models/LDSR.ckpt data/LDSR/model.ckpt

|

||||

cp -vf cache/models/LDSR.yaml data/LDSR/project.yaml

|

||||

|

||||

cp -vf cache/models/RealESRGAN_x4plus.pth data/RealESRGAN/

|

||||

cp -vf cache/models/RealESRGAN_x4plus_anime_6B.pth data/RealESRGAN/

|

||||

|

||||

cp -vrf cache/torch data/.cache/

|

||||

|

||||

mkdir -p data/.cache/huggingface/transformers/

|

||||

cp -vrf cache/transformers/* data/.cache/huggingface/transformers/

|

||||

|

||||

cp -v cache/custom-models/* data/StableDiffusion/

|

||||

|

||||

mkdir -p data/.cache/clip/

|

||||

cp -vf cache/weights/ViT-L-14.pt data/.cache/clip/

|

||||

|

||||

cp -vf cache/weights/codeformer.pth data/Codeformer/codeformer-v0.1.0.pth

|

||||

|

||||

cp -vf cache/weights/detection_Resnet50_Final.pth data/.cache/

|

||||

cp -vf cache/weights/parsing_parsenet.pth data/.cache/

|

||||

|

||||

cp -v embeddings/* data/embeddings/

|

||||

|

||||

echo this script was created 10/2022

|

||||

echo "Don't forget to run: docker compose --profile download up --build"

|

||||

echo "The cache and embeddings folders can be deleted, but it's not necessary."

|

||||
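The migration script above has no `set -e`, so a `cp` whose source is missing prints an error and the script carries on. A dry run can show beforehand which sources actually exist. This is a minimal sketch, not part of the change set: `MIGRATION_PLAN` and `plan` are hypothetical names, and only a subset of the script's copies is listed.

```python
import tempfile
from pathlib import Path
from typing import List, Tuple

# Subset of the old -> new locations used by scripts/migratev1tov2.sh.
MIGRATION_PLAN = [
    ("cache/models/model.ckpt", "data/StableDiffusion/model.ckpt"),
    ("cache/models/LDSR.ckpt", "data/LDSR/model.ckpt"),
    ("cache/models/LDSR.yaml", "data/LDSR/project.yaml"),
    ("cache/weights/codeformer.pth", "data/Codeformer/codeformer-v0.1.0.pth"),
]


def plan(root: Path) -> List[Tuple[str, str, bool]]:
    """Return (source, destination, source_exists) for every planned copy."""
    return [(src, dst, (root / src).is_file()) for src, dst in MIGRATION_PLAN]


# Demonstration in a throwaway directory that contains only model.ckpt.
with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "cache" / "models").mkdir(parents=True)
    (root / "cache" / "models" / "model.ckpt").write_bytes(b"")
    report = plan(root)
    present = [src for src, _dst, exists in report if exists]
```

Only the entries flagged as present would be copied successfully by the shell script. The rest would produce `cp` errors without stopping it.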

87

services/AUTOMATIC1111/Dockerfile

Normal file

@@ -0,0 +1,87 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM alpine/git:2.36.2 as download

|

||||

|

||||

SHELL ["/bin/sh", "-ceuxo", "pipefail"]

|

||||

|

||||

RUN git clone https://github.com/CompVis/stable-diffusion.git repositories/stable-diffusion && cd repositories/stable-diffusion && git reset --hard 69ae4b35e0a0f6ee1af8bb9a5d0016ccb27e36dc

|

||||

|

||||

RUN git clone https://github.com/sczhou/CodeFormer.git repositories/CodeFormer && cd repositories/CodeFormer && git reset --hard c5b4593074ba6214284d6acd5f1719b6c5d739af

|

||||

RUN git clone https://github.com/salesforce/BLIP.git repositories/BLIP && cd repositories/BLIP && git reset --hard 48211a1594f1321b00f14c9f7a5b4813144b2fb9

|

||||

|

||||

RUN <<EOF

|

||||

# because taming-transformers is huge

|

||||

git config --global http.postBuffer 1048576000

|

||||

git clone https://github.com/CompVis/taming-transformers.git repositories/taming-transformers

|

||||

cd repositories/taming-transformers

|

||||

git reset --hard 24268930bf1dce879235a7fddd0b2355b84d7ea6

|

||||

rm -rf data assets

|

||||

EOF

|

||||

|

||||

RUN git clone https://github.com/crowsonkb/k-diffusion.git repositories/k-diffusion && cd repositories/k-diffusion && git reset --hard f4e99857772fc3a126ba886aadf795a332774878

|

||||

|

||||

|

||||

FROM alpine:3 as xformers

|

||||

RUN apk add aria2

|

||||

RUN aria2c --dir / --out wheel.whl 'https://github.com/AbdBarho/stable-diffusion-webui-docker/releases/download/2.1.0/xformers-0.0.14.dev0-cp310-cp310-linux_x86_64.whl'

|

||||

|

||||

FROM python:3.10-slim

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

ENV DEBIAN_FRONTEND=noninteractive PIP_PREFER_BINARY=1 PIP_NO_CACHE_DIR=1

|

||||

|

||||

RUN pip install torch==1.12.1+cu113 torchvision==0.13.1+cu113 --extra-index-url https://download.pytorch.org/whl/cu113

|

||||

|

||||

RUN apt-get update && apt-get install fonts-dejavu-core rsync git -y && apt-get clean

|

||||

|

||||

|

||||

RUN <<EOF

|

||||

git clone https://github.com/AUTOMATIC1111/stable-diffusion-webui.git

|

||||

cd stable-diffusion-webui

|

||||

git reset --hard 1eb588cbf19924333b88beaa1ac0041904966640

|

||||

pip install -r requirements_versions.txt

|

||||

EOF

|

||||

|

||||

ENV ROOT=/stable-diffusion-webui \

|

||||

WORKDIR=/stable-diffusion-webui/repositories/stable-diffusion

|

||||

|

||||

|

||||

COPY --from=download /git/ ${ROOT}

|

||||

RUN pip install --prefer-binary --no-cache-dir -r ${ROOT}/repositories/CodeFormer/requirements.txt

|

||||

|

||||

# TODO: move to top

|

||||

RUN apt-get install jq moreutils -y

|

||||

|

||||

|

||||

# Note: don't update the sha of previous versions because the install will take forever

|

||||

# instead, update the repo state in a later step

|

||||

|

||||

ARG SHA=f49c08ea566385db339c6628f65c3a121033f67c

|

||||

RUN <<EOF

|

||||

cd stable-diffusion-webui

|

||||

git pull --rebase

|

||||

git reset --hard ${SHA}

|

||||

pip install -r requirements_versions.txt

|

||||

EOF

|

||||

|

||||

RUN pip install opencv-python-headless \

|

||||

git+https://github.com/TencentARC/GFPGAN.git@8d2447a2d918f8eba5a4a01463fd48e45126a379 \

|

||||

git+https://github.com/openai/CLIP.git@d50d76daa670286dd6cacf3bcd80b5e4823fc8e1 \

|

||||

pyngrok

|

||||

|

||||

COPY --from=xformers /wheel.whl xformers-0.0.14.dev0-cp310-cp310-linux_x86_64.whl

|

||||

RUN pip install xformers-0.0.14.dev0-cp310-cp310-linux_x86_64.whl

|

||||

|

||||

COPY . /docker

|

||||

RUN <<EOF

|

||||

chmod +x /docker/mount.sh && python3 /docker/info.py ${ROOT}/modules/ui.py

|

||||

EOF

|

||||

|

||||

|

||||

ENV CLI_ARGS=""

|

||||

WORKDIR ${WORKDIR}

|

||||

EXPOSE 7860

|

||||

# run, -u to not buffer stdout / stderr

|

||||

CMD /docker/mount.sh && \

|

||||

python3 -u ../../webui.py --listen --port 7860 --ckpt-dir ${ROOT}/models/Stable-diffusion --theme dark ${CLI_ARGS}

|

||||

10

services/AUTOMATIC1111/config.json

Normal file

@@ -0,0 +1,10 @@

|

||||

{
  "outdir_samples": "/output",
  "outdir_txt2img_samples": "/output/txt2img-images",
  "outdir_img2img_samples": "/output/img2img-images",
  "outdir_extras_samples": "/output/extras-images",
  "outdir_txt2img_grids": "/output/txt2img-grids",
  "outdir_img2img_grids": "/output/img2img-grids",
  "outdir_save": "/output/saved",
  "font": "DejaVuSans.ttf"
}

|

||||
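In `services/AUTOMATIC1111/mount.sh` this file is merged into the user's config with `jq '. * input'`. For two objects, jq's `*` is a recursive merge in which the right-hand value wins on conflicts. So the container defaults in this file override the same keys in `/data/config/auto/config.json`, and every other user key is kept. A rough Python equivalent of that merge, as a sketch: `deep_merge` is a hypothetical helper, and `show_progressbar` is only an example key.

```python
def deep_merge(base: dict, override: dict) -> dict:
    """Recursively merge `override` into `base`; `override` wins on conflicts."""
    merged = dict(base)
    for key, value in override.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            # Both sides hold objects: merge them with the same strategy.
            merged[key] = deep_merge(merged[key], value)
        else:
            merged[key] = value
    return merged


# Left operand: the user's existing config; right operand: the shipped defaults.
user_config = {"outdir_samples": "/tmp/elsewhere", "show_progressbar": True}
docker_config = {"outdir_samples": "/output", "font": "DejaVuSans.ttf"}
merged = deep_merge(user_config, docker_config)
```

With this operand order, a user edit to one of the `outdir_*` keys is reset on every container start, which is what keeps output inside the mounted `/output` volume.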

@@ -7,7 +7,7 @@ file.write_text(

|

||||

.replace(' return demo', """

|

||||

with demo:

|

||||

gr.Markdown(

|

||||

'Created by [AUTOMATIC1111 / stable-diffusion-webui-docker](https://github.com/AbdBarho/stable-diffusion-webui-docker/tree/master/AUTOMATIC1111)'

|

||||

'Created by [AUTOMATIC1111 / stable-diffusion-webui-docker](https://github.com/AbdBarho/stable-diffusion-webui-docker/)'

|

||||

)

|

||||

return demo

|

||||

""", 1)

|

||||

48

services/AUTOMATIC1111/mount.sh

Executable file

@@ -0,0 +1,48 @@

|

||||

#!/bin/bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

mkdir -p /data/config/auto/

|

||||

cp -n /docker/config.json /data/config/auto/config.json

|

||||

jq '. * input' /data/config/auto/config.json /docker/config.json | sponge /data/config/auto/config.json

|

||||

|

||||

if [ ! -f /data/config/auto/ui-config.json ]; then

|

||||

echo '{}' >/data/config/auto/ui-config.json

|

||||

fi

|

||||

|

||||

declare -A MOUNTS

|

||||

|

||||

MOUNTS["/root/.cache"]="/data/.cache"

|

||||

|

||||

# main

|

||||

MOUNTS["${ROOT}/models/Stable-diffusion"]="/data/StableDiffusion"

|

||||

MOUNTS["${ROOT}/models/Codeformer"]="/data/Codeformer"

|

||||

MOUNTS["${ROOT}/models/GFPGAN"]="/data/GFPGAN"

|

||||

MOUNTS["${ROOT}/models/ESRGAN"]="/data/ESRGAN"

|

||||

MOUNTS["${ROOT}/models/BSRGAN"]="/data/BSRGAN"

|

||||

MOUNTS["${ROOT}/models/RealESRGAN"]="/data/RealESRGAN"

|

||||

MOUNTS["${ROOT}/models/SwinIR"]="/data/SwinIR"

|

||||

MOUNTS["${ROOT}/models/ScuNET"]="/data/ScuNET"

|

||||

MOUNTS["${ROOT}/models/LDSR"]="/data/LDSR"

|

||||

MOUNTS["${ROOT}/models/hypernetworks"]="/data/Hypernetworks"

|

||||

|

||||

MOUNTS["${ROOT}/embeddings"]="/data/embeddings"

|

||||

MOUNTS["${ROOT}/config.json"]="/data/config/auto/config.json"

|

||||

MOUNTS["${ROOT}/ui-config.json"]="/data/config/auto/ui-config.json"

|

||||

|

||||

# extra hacks

|

||||

MOUNTS["${ROOT}/repositories/CodeFormer/weights/facelib"]="/data/.cache"

|

||||

|

||||

for to_path in "${!MOUNTS[@]}"; do

|

||||

set -Eeuo pipefail

|

||||

from_path="${MOUNTS[${to_path}]}"

|

||||

rm -rf "${to_path}"

|

||||

if [ ! -f "$from_path" ]; then

|

||||

mkdir -vp "$from_path"

|

||||

fi

|

||||

mkdir -vp "$(dirname "${to_path}")"

|

||||

ln -sT "${from_path}" "${to_path}"

|

||||

echo "Mounted $(basename "${from_path}")"

|

||||

done

|

||||

|

||||

mkdir -p /output/saved /output/txt2img-images/ /output/img2img-images /output/extras-images/ /output/grids/ /output/txt2img-grids/ /output/img2img-grids/

|

||||
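The loop in `mount.sh` does the same thing for each entry. It removes whatever is at the target path inside the image, creates the data directory if the source is not an existing file, and replaces the target with a symlink into `/data`. The same steps in Python, as a sketch run against a temporary directory: `mount` is a hypothetical helper name and the paths are illustrative.

```python
import shutil
import tempfile
from pathlib import Path


def mount(to_path: Path, from_path: Path) -> None:
    """Replace `to_path` with a symlink pointing at `from_path`."""
    # rm -rf "${to_path}"
    if to_path.is_symlink() or to_path.is_file():
        to_path.unlink()
    elif to_path.is_dir():
        shutil.rmtree(to_path)
    # Create the source directory unless the source is an existing file.
    if not from_path.is_file():
        from_path.mkdir(parents=True, exist_ok=True)
    # mkdir -p "$(dirname "${to_path}")" && ln -sT "${from_path}" "${to_path}"
    to_path.parent.mkdir(parents=True, exist_ok=True)
    to_path.symlink_to(from_path)


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    target = root / "app" / "models" / "GFPGAN"
    target.mkdir(parents=True)
    (target / "stale.txt").write_text("old")
    source = root / "data" / "GFPGAN"
    mount(target, source)
    is_link = target.is_symlink()
    resolved_ok = target.resolve() == source.resolve()
    stale_gone = not (target / "stale.txt").exists()
```

Because the target is deleted first, anything the image shipped at that path is discarded in favour of the contents of the data volume.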

6

services/download/Dockerfile

Normal file

@@ -0,0 +1,6 @@

|

||||

FROM bash:alpine3.15

|

||||

|

||||

RUN apk add parallel aria2

|

||||

COPY . /docker

|

||||

RUN chmod +x /docker/download.sh

|

||||

ENTRYPOINT ["/docker/download.sh"]

|

||||

6

services/download/checksums.sha256

Normal file

@@ -0,0 +1,6 @@

|

||||

fe4efff1e174c627256e44ec2991ba279b3816e364b49f9be2abc0b3ff3f8556 /data/StableDiffusion/model.ckpt

|

||||

e2cd4703ab14f4d01fd1383a8a8b266f9a5833dacee8e6a79d3bf21a1b6be5ad /data/GFPGAN/GFPGANv1.4.pth

|

||||

4fa0d38905f75ac06eb49a7951b426670021be3018265fd191d2125df9d682f1 /data/RealESRGAN/RealESRGAN_x4plus.pth

|

||||

f872d837d3c90ed2e05227bed711af5671a6fd1c9f7d7e91c911a61f155e99da /data/RealESRGAN/RealESRGAN_x4plus_anime_6B.pth

|

||||

c209caecac2f97b4bb8f4d726b70ac2ac9b35904b7fc99801e1f5e61f9210c13 /data/LDSR/model.ckpt

|

||||

9d6ad53c5dafeb07200fb712db14b813b527edd262bc80ea136777bdb41be2ba /data/LDSR/project.yaml

|

||||

29

services/download/download.sh

Executable file

@@ -0,0 +1,29 @@

|

||||

#!/usr/bin/env bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

mkdir -p /data/.cache /data/StableDiffusion /data/Codeformer /data/GFPGAN /data/ESRGAN /data/BSRGAN /data/RealESRGAN /data/SwinIR /data/LDSR /data/ScuNET /data/embeddings

|

||||

|

||||

echo "Downloading, this might take a while..."

|

||||

|

||||

aria2c --input-file /docker/links.txt --dir /data --continue

|

||||

|

||||

echo "Checking SHAs..."

|

||||

|

||||

parallel --will-cite -a /docker/checksums.sha256 "echo -n {} | sha256sum -c"

|

||||

|

||||

# aria2c already does hash check

|

||||

# cc6cb27103417325ff94f52b7a5d2dde45a7515b25c255d8e396c90014281516 /data/StableDiffusion/v1-5-pruned-emaonly.ckpt

|

||||

cat <<EOF

|

||||

By using this software, you agree to the following licenses:

|

||||

https://github.com/CompVis/stable-diffusion/blob/main/LICENSE

|

||||

https://github.com/AbdBarho/stable-diffusion-webui-docker/blob/master/LICENSE

|

||||

https://github.com/sd-webui/stable-diffusion-webui/blob/master/LICENSE

|

||||

https://github.com/invoke-ai/InvokeAI/blob/main/LICENSE

|

||||

https://github.com/cszn/BSRGAN/blob/main/LICENSE

|

||||

https://github.com/sczhou/CodeFormer/blob/master/LICENSE

|

||||

https://github.com/TencentARC/GFPGAN/blob/master/LICENSE

|

||||

https://github.com/xinntao/Real-ESRGAN/blob/master/LICENSE

|

||||

https://github.com/xinntao/ESRGAN/blob/master/LICENSE

|

||||

https://github.com/cszn/SCUNet/blob/main/LICENSE

|

||||

EOF

|

||||
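The "Checking SHAs" step feeds each line of `checksums.sha256` to `sha256sum -c`, which recomputes the file's digest and compares it with the recorded one. A minimal Python sketch of the same check, assuming the usual `<hex digest>  <path>` line format. `sha256_of` and `verify` are hypothetical helper names, and the demo uses temporary files instead of the real model paths.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Dict, List


def sha256_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large checkpoints need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify(checksum_lines: List[str]) -> Dict[str, bool]:
    """Map each listed path to True if it exists and its digest matches."""
    results = {}
    for line in checksum_lines:
        if not line.strip():
            continue
        expected, name = line.split(maxsplit=1)
        path = Path(name.strip())
        results[name.strip()] = path.is_file() and sha256_of(path) == expected
    return results


with tempfile.TemporaryDirectory() as tmp:
    good = Path(tmp) / "good.bin"
    good.write_bytes(b"hello")
    missing = Path(tmp) / "missing.bin"
    lines = [
        f"{hashlib.sha256(b'hello').hexdigest()}  {good}",
        f"{'0' * 64}  {missing}",
    ]
    results = verify(lines)
    good_ok = results[str(good)]
    missing_ok = results[str(missing)]
```

A missing file is reported as a failure rather than skipped, matching the way `sha256sum -c` treats an unreadable file.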

20

services/download/links.txt

Normal file

@@ -0,0 +1,20 @@

|

||||

magnet:?xt=urn:btih:2daef5b5f63a16a9af9169a529b1a773fc452637&dn=v1-5-pruned-emaonly.ckpt&tr=udp%3a%2f%2ftracker.opentrackr.org%3a1337%2fannounce&tr=udp%3a%2f%2f9.rarbg.com%3a2810%2fannounce&tr=udp%3a%2f%2ftracker.openbittorrent.com%3a6969%2fannounce&tr=udp%3a%2f%2fopentracker.i2p.rocks%3a6969%2fannounce&tr=https%3a%2f%2fopentracker.i2p.rocks%3a443%2fannounce&tr=http%3a%2f%2ftracker.openbittorrent.com%3a80%2fannounce&tr=udp%3a%2f%2ftracker.torrent.eu.org%3a451%2fannounce&tr=udp%3a%2f%2fopen.stealth.si%3a80%2fannounce&tr=udp%3a%2f%2fvibe.sleepyinternetfun.xyz%3a1738%2fannounce&tr=udp%3a%2f%2ftracker2.dler.org%3a80%2fannounce&tr=udp%3a%2f%2ftracker1.bt.moack.co.kr%3a80%2fannounce&tr=udp%3a%2f%2ftracker.zemoj.com%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.tiny-vps.com%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.theoks.net%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.publictracker.xyz%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.monitorit4.me%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.moeking.me%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.lelux.fi%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.dler.org%3a6969%2fannounce&tr=udp%3a%2f%2ftracker.army%3a6969%2fannounce

|

||||

select-file=1

|

||||

index-out=1=StableDiffusion/v1-5-pruned-emaonly.ckpt

|

||||

follow-torrent=mem

|

||||

follow-metalink=mem

|

||||

seed-time=0

|

||||

# this is the only way aria2c won't fail if the file already exists

|

||||

check-integrity=true

|

||||

https://drive.yerf.org/wl/?id=EBfTrmcCCUAGaQBXVIj5lJmEhjoP1tgl&mode=grid&download=1

|

||||

out=StableDiffusion/model.ckpt

|

||||

https://github.com/TencentARC/GFPGAN/releases/download/v1.3.4/GFPGANv1.4.pth

|

||||

out=GFPGAN/GFPGANv1.4.pth

|

||||

https://github.com/xinntao/Real-ESRGAN/releases/download/v0.1.0/RealESRGAN_x4plus.pth

|

||||

out=RealESRGAN/RealESRGAN_x4plus.pth

|

||||

https://github.com/xinntao/Real-ESRGAN/releases/download/v0.2.2.4/RealESRGAN_x4plus_anime_6B.pth

|

||||

out=RealESRGAN/RealESRGAN_x4plus_anime_6B.pth

|

||||

https://heibox.uni-heidelberg.de/f/31a76b13ea27482981b4/?dl=1

|

||||

out=LDSR/project.yaml

|

||||

https://heibox.uni-heidelberg.de/f/578df07c8fc04ffbadf3/?dl=1

|

||||

out=LDSR/model.ckpt

|

||||

48

services/hlky/Dockerfile

Normal file

@@ -0,0 +1,48 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM continuumio/miniconda3:4.12.0

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

ENV DEBIAN_FRONTEND=noninteractive

|

||||

|

||||

RUN conda install python=3.8.5 && conda clean -a -y

|

||||

RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y

|

||||

|

||||

RUN apt-get update && apt-get install fonts-dejavu-core rsync gcc -y && apt-get clean

|

||||

|

||||

|

||||

RUN <<EOF

|

||||

git config --global http.postBuffer 1048576000

|

||||

git clone https://github.com/sd-webui/stable-diffusion-webui.git stable-diffusion

|

||||

cd stable-diffusion

|

||||

git reset --hard 1a9c053cb7b6832695771db2555c0adc9b41e95f

|

||||

conda env update --file environment.yaml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

|

||||

ARG BRANCH=dev SHA=8d1e42b9c50c747d056b0a98f3c2eb7652fb73a7

|

||||

RUN <<EOF

|

||||

cd stable-diffusion

|

||||

git fetch

|

||||

git checkout ${BRANCH}

|

||||

git reset --hard ${SHA}

|

||||

conda env update --file environment.yaml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

# add info

|

||||

COPY . /docker/

|

||||

RUN <<EOF

|

||||

python /docker/info.py /stable-diffusion/frontend/frontend.py

|

||||

chmod +x /docker/mount.sh /docker/run.sh

|

||||

# streamlit

|

||||

sed -i -- 's/8501/7860/g' /stable-diffusion/.streamlit/config.toml

|

||||

EOF

|

||||

|

||||

WORKDIR /stable-diffusion

|

||||

ENV PYTHONPATH="${PYTHONPATH}:${PWD}" STREAMLIT_SERVER_HEADLESS=true USE_STREAMLIT=0 CLI_ARGS=""

|

||||

EXPOSE 7860

|

||||

|

||||

CMD /docker/mount.sh && /docker/run.sh

|

||||

31

services/hlky/mount.sh

Executable file

@@ -0,0 +1,31 @@

|

||||

#!/bin/bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

declare -A MOUNTS

|

||||

|

||||

ROOT=/stable-diffusion/src

|

||||

|

||||

# cache

|

||||

MOUNTS["/root/.cache"]=/data/.cache

|

||||

# ui specific

|

||||

MOUNTS["${PWD}/models/realesrgan"]=/data/RealESRGAN

|

||||

MOUNTS["${PWD}/models/ldsr"]=/data/LDSR

|

||||

MOUNTS["${PWD}/models/custom"]=/data/StableDiffusion

|

||||

|

||||

# hack

|

||||

MOUNTS["${PWD}/models/gfpgan/GFPGANv1.3.pth"]=/data/GFPGAN/GFPGANv1.4.pth

|

||||

MOUNTS["${PWD}/models/gfpgan/GFPGANv1.4.pth"]=/data/GFPGAN/GFPGANv1.4.pth

|

||||

|

||||

|

||||

for to_path in "${!MOUNTS[@]}"; do

|

||||

set -Eeuo pipefail

|

||||

from_path="${MOUNTS[${to_path}]}"

|

||||

rm -rf "${to_path}"

|

||||

mkdir -p "$(dirname "${to_path}")"

|

||||

ln -sT "${from_path}" "${to_path}"

|

||||

echo "Mounted $(basename "${from_path}")"

|

||||

done

|

||||

|

||||

# streamlit config

|

||||

ln -sf /docker/userconfig_streamlit.yaml /stable-diffusion/configs/webui/userconfig_streamlit.yaml

|

||||

10

services/hlky/run.sh

Executable file

@@ -0,0 +1,10 @@

|

||||

#!/bin/bash

set -Eeuo pipefail

echo "USE_STREAMLIT = ${USE_STREAMLIT}"
if [ "${USE_STREAMLIT}" == "1" ]; then
  python -u -m streamlit run scripts/webui_streamlit.py
else
  python3 -u scripts/webui.py --outdir /output --ckpt /data/StableDiffusion/v1-5-pruned-emaonly.ckpt ${CLI_ARGS}
fi

|

||||

9

services/hlky/userconfig_streamlit.yaml

Normal file

@@ -0,0 +1,9 @@

|

||||

general:
  version: 1.20.0
  outdir: /output
  default_model: "Stable Diffusion v1.5"
  default_model_path: /data/StableDiffusion/v1-5-pruned-emaonly.ckpt
  outdir_txt2img: /output/txt2img-samples
  outdir_img2img: /output/img2img-samples
  outdir_img2txt: /output/img2txt
  optimized_turbo: True

|

||||

52

services/lstein/Dockerfile

Normal file

@@ -0,0 +1,52 @@

|

||||

# syntax=docker/dockerfile:1

|

||||

|

||||

FROM continuumio/miniconda3:4.12.0

|

||||

|

||||

SHELL ["/bin/bash", "-ceuxo", "pipefail"]

|

||||

|

||||

ENV DEBIAN_FRONTEND=noninteractive

|

||||

|

||||

# now it requires python3.9

|

||||

RUN conda install python=3.9 && conda clean -a -y

|

||||

RUN conda install pytorch==1.11.0 torchvision==0.12.0 cudatoolkit=11.3 -c pytorch && conda clean -a -y

|

||||

|

||||

RUN apt-get update && apt-get install fonts-dejavu-core rsync gcc -y && apt-get clean

|

||||

|

||||

ENV PIP_EXISTS_ACTION=w

|

||||

|

||||

RUN <<EOF

|

||||

git clone https://github.com/invoke-ai/InvokeAI.git stable-diffusion

|

||||

cd stable-diffusion

|

||||

git reset --hard 79e79b78aaeedb49afcc795e0e00eebfdbedee96

|

||||

git config --global http.postBuffer 1048576000

|

||||

conda env update --file environment.yml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

|

||||

ARG BRANCH=development SHA=554445a985d970200095bbcb109273a49c462682

|

||||

RUN <<EOF

|

||||

cd stable-diffusion

|

||||

git fetch

|

||||

git reset --hard

|

||||

git checkout ${BRANCH}

|

||||

git reset --hard ${SHA}

|

||||

conda env update --file environment.yml -n base

|

||||

conda clean -a -y

|

||||

EOF

|

||||

|

||||

RUN pip uninstall opencv-python -y && pip install --prefer-binary --force-reinstall --no-cache-dir opencv-python-headless

|

||||

|

||||

COPY . /docker/

|

||||

RUN <<EOF

|

||||

python3 /docker/info.py /stable-diffusion/frontend/dist/index.html

|

||||

chmod +x /docker/mount.sh

|

||||

EOF

|

||||

|

||||

|

||||

ENV PRELOAD=false CLI_ARGS=""

|

||||

WORKDIR /stable-diffusion

|

||||

EXPOSE 7860

|

||||

|

||||

CMD /docker/mount.sh && \

|

||||

python3 -u scripts/invoke.py --outdir /output --web --host 0.0.0.0 --port 7860 ${CLI_ARGS}

|

||||

13

services/lstein/info.py

Normal file

@@ -0,0 +1,13 @@

|

||||

import sys

|

||||

from pathlib import Path

|

||||

|

||||

file = Path(sys.argv[1])

|

||||

file.write_text(

|

||||

file.read_text()\

|

||||

.replace(' <div id="root"></div>', """

|

||||

<div id="root"></div>

|

||||

<div>

|

||||

Deployed with <a href="https://github.com/AbdBarho/stable-diffusion-webui-docker/">stable-diffusion-webui-docker</a>

|

||||

</div>

|

||||

""", 1)

|

||||

)

|

||||
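`info.py` patches the built frontend with `str.replace`, which returns the text unchanged when the marker is absent. So if the upstream `index.html` layout changes, the footer is dropped without any error. A guarded variant could look like the sketch below. `inject_footer`, `MARKER`, and `FOOTER` are hypothetical names, the footer text is shortened for illustration, and the script in this change set does not perform these checks.

```python
MARKER = '<div id="root"></div>'
FOOTER = "<div>Deployed with stable-diffusion-webui-docker</div>"


def inject_footer(html: str) -> str:
    """Insert FOOTER right after the first MARKER, exactly once."""
    if MARKER not in html:
        raise ValueError("marker not found; the upstream page layout may have changed")
    if FOOTER in html:
        # Already patched, e.g. when the build step runs on a cached layer.
        return html
    return html.replace(MARKER, MARKER + "\n" + FOOTER, 1)


page = "<body>\n" + MARKER + "\n</body>"
patched = inject_footer(page)
patched_twice = inject_footer(patched)
```

Raising on a missing marker turns a silently unpatched page into a failed image build, which is easier to notice.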

29

services/lstein/mount.sh

Executable file

@@ -0,0 +1,29 @@

|

||||

#!/bin/bash

|

||||

|

||||

set -Eeuo pipefail

|

||||

|

||||

declare -A MOUNTS

|

||||

|

||||

# cache

|

||||

MOUNTS["/root/.cache"]=/data/.cache

|

||||

# ui specific

|

||||

MOUNTS["${PWD}/models/ldm/stable-diffusion-v1/model.ckpt"]=/data/StableDiffusion/model.ckpt

|

||||

MOUNTS["${PWD}/src/gfpgan/experiments/pretrained_models/GFPGANv1.4.pth"]=/data/GFPGAN/GFPGANv1.4.pth

|

||||

MOUNTS["${PWD}/ldm/invoke/restoration/codeformer/weights"]=/data/Codeformer

|

||||

# hacks

|

||||

MOUNTS["/opt/conda/lib/python3.9/site-packages/facexlib/weights"]=/data/.cache

|

||||

MOUNTS["/opt/conda/lib/python3.9/site-packages/realesrgan/weights"]=/data/RealESRGAN

|

||||

MOUNTS["${PWD}/gfpgan/weights"]=/data/.cache

|

||||

|

||||

for to_path in "${!MOUNTS[@]}"; do

|

||||

set -Eeuo pipefail

|

||||

from_path="${MOUNTS[${to_path}]}"

|

||||

rm -rf "${to_path}"

|

||||

mkdir -p "$(dirname "${to_path}")"

|

||||

ln -sT "${from_path}" "${to_path}"

|

||||

echo "Mounted $(basename "${from_path}")"

|

||||

done

|

||||

|

||||

if [ "${PRELOAD}" == "true" ]; then

|

||||

python3 -u scripts/preload_models.py

|

||||

fi

|

||||
